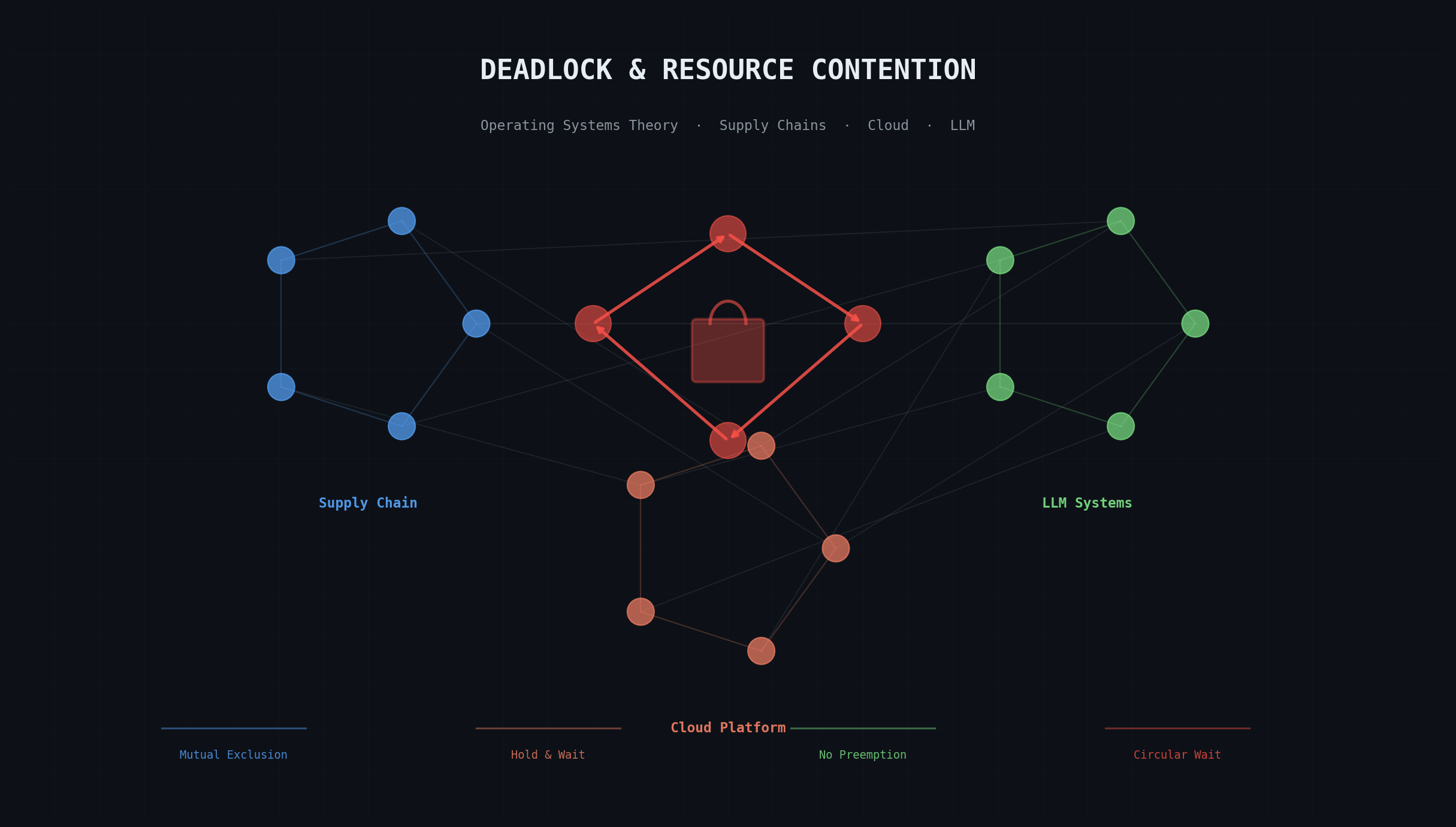

Deadlock requires four simultaneous conditions: mutual exclusion, hold and wait, no preemption, and circular wait. These Coffman conditions surface in supply chain attacks, cloud platform fragmentation, and LLM inference starvation as readily as in operating system threads. Ten engineering lessons convert the theory into prevention and recovery controls.

Introduction

Four conditions. All four present simultaneously, and the system can stop. Permanently. The Coffman conditions: mutual exclusion, hold and wait, no preemption, circular wait. These form the necessary skeleton of deadlock: remove any one and deadlock cannot occur. They do not care whether the resources in question are memory pages, npm credentials, cloud billing regions, or GPU inference slots.

Consider what happened in March 2026: malicious axios package versions cascaded through npm because a compromised credential created textbook hold-and-wait, where each build held a lock on developer identity while waiting for upstream package access (reconstructed in Axios npm supply-chain compromise). Or consider SaaS bifurcation in China from 2019 onward, where service withdrawal introduced circular dependencies no single vendor could unilaterally resolve (examined in digital sovereignty and fragmented cloud realities). Or LLM inference scheduling, where strict-priority token-slot allocation without aging starves lower-priority jobs indefinitely (the architectural lineage traced in large language models in practice).

This article reconstructs these three domains — supply chain security, platform operations, and AI systems — through concurrency theory. Not as metaphor. As diagnostic structure. Map incident patterns onto Coffman’s conditions and classical scheduling algorithms, and something striking emerges: decades of OS mitigation strategies transfer almost intact to domains where contention is typically shrugged off as inevitable rather than dismantled through deliberate engineering. [1] This article is not legal advice.

Evidence Scope and Claim Boundaries

This article combines three claim classes to preserve analytical precision. The supply-chain section is incident-confirmed and anchored to a timestamped compromise with independently corroborated technical artifacts. The cloud-platform section uses documented policy and operating-model evidence, and it also includes illustrative composite continuity flows where no single outage report captures the full dependency cycle. The LLM section is pattern-driven and models recurrent production scheduling behavior rather than attributing deadlock risk to one named 2026 outage. This separation keeps the framework operational without overstating evidentiary certainty.

Core Concepts

- Deadlock: A state in which two or more processes are permanently blocked, each waiting for a resource held by another, with no possibility of progress without external intervention.

- Resource contention: A condition in which multiple processes or threads compete for the same limited resource, leading to queuing, performance degradation, or blocking.

- Starvation: A scheduling failure in which a process is indefinitely denied access to a resource it needs, even though the resource becomes available to other processes.

- Priority inversion: A concurrency anomaly in which a high-priority task is blocked by a lower-priority task that holds a required resource, while medium-priority tasks run ahead of both.

- Livelock: A condition in which processes continuously change state in response to each other without making progress, unlike deadlock where processes are completely idle.

Understanding Deadlock: Mutex, Semaphore, and the Coffman Conditions

Mutual Exclusion and the Locking Primitive

A mutex (mutual exclusion lock) enforces a blunt promise: exactly one thread holds a resource at a time. Period. In the kernel, this prevents data corruption when multiple CPUs compete for the same memory location. The analogous primitive surfaces everywhere, though people rarely call it by name. In supply chains, it is credential ownership — exactly one maintainer should hold the right to publish to a package. In cloud platforms, region-scoped control: one legal entity holds billing and operational authority for a geographic zone. In LLM inference, token-slot allocation during the critical encoding-decoding phase.

Mutual exclusion is not optional. Strip it away and concurrent modification produces data loss, authorization confusion, inference failures — pick your catastrophe. But enforcement carries its own cost: contention. Threads queue. The queue grows. Does it grow efficiently, or does it lock the entire system?

Semaphores: Counter-Based Access Control

A semaphore generalizes mutual exclusion from one resource to N identical copies. Edsger Dijkstra invented the construct in 1962–1963 while building the THE multiprogramming system at Eindhoven — an era when a single mainframe served an entire university. Initialize a semaphore to N and the first N threads sail through; the (N+1)th waits. If that (N+1)th thread waits forever? That is starvation: a failure state where access is denied indefinitely even though the resource keeps becoming available to others.
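The counting behavior described above can be sketched with Python's standard `threading.BoundedSemaphore`. This is a minimal illustration, not a production pattern: the slot count `N_SLOTS`, the `worker` function, and the `peak` counter are all hypothetical names introduced here to make the bound observable.

```python
import threading
import time

N_SLOTS = 2  # hypothetical number of identical resource copies
slots = threading.BoundedSemaphore(N_SLOTS)
state_lock = threading.Lock()  # protects the two counters below
active = 0  # holders currently inside the guarded region
peak = 0    # highest number of simultaneous holders observed


def worker() -> None:
    global active, peak
    with slots:  # blocks while N_SLOTS holders are already inside
        with state_lock:
            active += 1
            peak = max(peak, active)
        time.sleep(0.01)  # stand-in for real work
        with state_lock:
            active -= 1


threads = [threading.Thread(target=worker) for _ in range(6)]
for t in threads:
    t.start()
for t in threads:
    t.join()
```

Six workers contend for two permits, so `peak` never exceeds `N_SLOTS`. Note that a plain semaphore makes no fairness promise about which waiter is admitted next, which is exactly the gap that starvation exploits.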

Tanenbaum and Bos (2015) stress that modern operating systems coordinate locks, schedulers, and memory managers across multicore processors and virtualized workloads. The deadlock model remains the same, but the operational impact grows because one blocked path can cascade across all dependent execution contexts.

Think of maintainer credentials as semaphores with N=1. When the axios maintainer’s credential was compromised in 2026, normal package publication became a blocked operation for every downstream consumer — thousands of builds, stalled. In cloud regions, semaphores represent compute capacity; exhaust a region’s data-processing quota with one runaway application and every other application starves. LLM inference pipelines work the same way. Token-slot semaphores gate parallelism, and if higher-priority requests monopolize every slot, lower-priority jobs wait. Indefinitely.

The Four Coffman Conditions: Structure of Deadlock

Edward G. Coffman, Jr., Michael J. Elphick, and Arie Shoshani formalized four conditions for deadlock in their 1971 paper “System Deadlocks” (ACM Computing Surveys, 3(2), pp. 67-78). The conditions, now known as the Coffman conditions, are necessary: deadlock can occur only when all four hold simultaneously. They are not sufficient in general, because a resource type with multiple instances can satisfy all four without the system being deadlocked.

- Mutual Exclusion: Resources cannot be simultaneously held by multiple threads.

- Hold and Wait: A thread holding one resource can request another without releasing the first.

- No Preemption: The system cannot forcibly reclaim a resource from a thread that holds it.

- Circular Wait: There exists a cycle in the resource-request graph such that T₁ waits for a resource held by T₂, T₂ waits for a resource held by T₃, …, Tₙ waits for a resource held by T₁.

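Breaking any one of these four conditions prevents deadlock. This is not abstract. It is a design constraint that applies wherever the four conditions can be identified. Operating systems prevent deadlock by attacking one of them: (a) removing mutual exclusion by making the resource shareable (rarely viable where isolation protects security), (b) removing hold and wait by requiring a thread to request everything it needs at once, or to release what it holds before requesting more, (c) allowing preemption (priority interrupts), or (d) imposing a total order on resource acquisition (resource ordering). The Banker’s algorithm belongs to a separate family, deadlock avoidance: it leaves the conditions in place and refuses any allocation that would move the system into an unsafe state.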
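The circular-wait condition is the one most easily checked mechanically. As a minimal sketch, assuming the wait relationships have already been collected into a dictionary (the function name `find_wait_cycle` and the graph encoding are illustrative choices, not taken from any particular system), a depth-first search finds a cycle if one exists:

```python
from typing import Dict, List, Optional


def find_wait_cycle(waits_for: Dict[str, List[str]]) -> Optional[List[str]]:
    """Return one cycle in a wait-for graph as a node list, or None.

    waits_for maps each thread to the threads whose resources it is waiting on.
    """
    WHITE, GREY, BLACK = 0, 1, 2  # unvisited, on current path, fully explored
    color: Dict[str, int] = {node: WHITE for node in waits_for}
    path: List[str] = []

    def visit(node: str) -> Optional[List[str]]:
        color[node] = GREY
        path.append(node)
        for nxt in waits_for.get(node, []):
            state = color.get(nxt, WHITE)
            if state == GREY:
                # nxt is already on the current path: the cycle closes here.
                return path[path.index(nxt):] + [nxt]
            if state == WHITE:
                found = visit(nxt)
                if found:
                    return found
        path.pop()
        color[node] = BLACK
        return None

    for node in list(waits_for):
        if color[node] == WHITE:
            found = visit(node)
            if found:
                return found
    return None
```

For the three-thread cycle in the definition above, `find_wait_cycle({"T1": ["T2"], "T2": ["T3"], "T3": ["T1"]})` returns `["T1", "T2", "T3", "T1"]`; a simple chain with no back edge returns `None`.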

Supply Chain Incident: Circular Wait in Credential Queues

The axios npm compromise of March 2026 exhibits all four Coffman conditions in a credential-management system. The full incident reconstruction and provenance-trust lessons are detailed in the companion supply-chain article.

Timeline and Mechanics

Between 30 and 31 March 2026, malicious versions of axios (1.14.1 and 0.30.4) appeared on npm and propagated through automatic dependency resolution. The attack chain combined social engineering to compromise a maintainer account, then used that credential to inject a counterfeit dependency (plain-crypto-js@4.2.1) into axios source repositories. During installation, the injected dependency executed obfuscated code that staged payloads to macOS, Windows, and Linux platforms and communicated with C2 infrastructure at sfrclak[.]com:8000.

The incident pattern reveals hold-and-wait behavior — textbook, almost uncomfortably clean. A developer building a project holds the right to use their development environment. That developer then waits for npm to return requested packages. During that wait, if the registry has been compromised, the developer’s environment is exposed to malicious execution. Here is the trap: developers cannot easily revoke their own build-environment access (no preemption), and multiple developers wait for the same package through circular dependency chains. One compromised credential propagates through the entire coordination system. What looks like a supply-chain attack is, structurally, a four-condition deadlock playing out across organizational boundaries.

Mapping to Coffman Conditions

Mutual Exclusion. Exactly one entity (the registered maintainer) holds authority to publish new versions to npm. This mutual exclusion is correct. The problem is not the lock; it is the conditions under which it is held.

Hold and Wait. The maintainer holds the publish credential while the build system waits for package availability. If the maintainer is compromised through social engineering, the malicious actor now both holds the credential and controls what code is released. Developers downstream are forced to wait for those releases.

No Preemption. Once a package version is published to npm, the npm registry cannot unilaterally revoke it from every building system that has already downloaded it. There is no preemption mechanism to say “stop using version 1.14.1 immediately, everywhere.” Takedown is manual and propagates host by host, at the pace of the slowest one.

Circular Wait. A developer’s build waits for axios. Axios depends on other packages. Those packages may have their own dependencies. If one transitive dependency is compromised, the build-dependency graph now contains a backdoor. A team that depends on a downstream project that depends on axios is now waiting for axios indirectly. The wait cycle is implicit in the dependency graph.

Consequences and Episodic Nature

The incident was detected and the malicious versions removed from npm within hours. Yet the circular-wait structure ensured that systems that had already downloaded the malicious packages remained affected. Incident response teams had to hunt every system that resolved the bad version during the exposure window. The no-preemption property meant that no single action could stop in-flight exploitation. Each host required active intervention.

From a deadlock prevention perspective, this incident exemplifies the failure to break the circular wait condition. Package managers enforce dependency order (topological sort), yet they do not prevent transitive hold and wait cycles. Some package managers support version pinning and lockfile practices, but adoption remains voluntary.

Platform Fragmentation: Circular Dependencies in Regional Control

Cloud platform operations present a second recursive pattern. When foreign vendors enter jurisdictions with regulatory sovereignty requirements, they often partition global service delivery into region-scoped operating units. Microsoft’s Azure through 21Vianet (China), Salesforce through Alibaba Cloud (China), and Unity’s region-specific engine paths show this pattern. The policy and engineering dimensions of this fragmentation are examined in digital sovereignty and cloud access fragmentation. The resulting architecture embeds hold-and-wait cycles at the platform level.

Data Residency as a Holding Pattern

A platform entering China must hold data within China — regulation demands it. The platform also holds responsibility for service continuity, and it waits for the regional partner to deliver operational capability. Now consider what happens when the regional partner is later required to block access to the platform for policy reasons. Neither party can unilaterally resolve the impasse. The global platform holds data custody. The region holds operational control. Neither can preempt the other. Classic deadlock, except the “threads” are multinational corporations and the “scheduler” is a regulatory apparatus with no rollback mechanism.

From 2025 to 2026, this manifested acutely in developer tooling. Unity announced withdrawal of regional access to Unity 6 and the Asset Store for mainland China, Hong Kong, and Macau. The withdrawal was not instantaneous preemption. It was staged notification, followed by account lockout, followed by blocked features. The no-preemption principle held: once a developer’s project was bound to Unity infrastructure in an affected region, that developer could not preempt the withdrawal decision. Similarly, Unity could not preempt regional regulatory constraints.

Circular Dependencies in Business Continuity

A Salesforce customer in China relies on Salesforce through Alibaba Cloud. Alibaba Cloud holds infrastructure control. Salesforce holds product responsibility. If a policy change requires one party to withdraw, the other cannot preempt the exit. A customer waiting for Salesforce finds Salesforce waiting for Alibaba, which is waiting for regulatory clearance to continue operations. The circular wait does not lock the system instantaneously; it plays out over months as businesses realize their continuity assumptions no longer hold. Contracts specify service levels, but no contract can override political risk. This continuity description is an illustrative composite model grounded in documented sovereign-cloud operating structures rather than a single outage timeline.

Starvation in Communication Channels

Fragmented control produces a quieter failure: starvation in alert and escalation pathways. Atlassian’s Opsgenie documentation notes that SMS delivery to China faces telecom-level blocking. A customer in China relying on Opsgenie for incident alerting cannot receive SMS notifications reliably. The alert system holds the customer’s configuration; the customer’s telco holds SMS delivery. Neither can preempt the blockage. Notifications are generated, dispatched, and then — nothing. They vanish into a telecom void.

By the OS definition, this is starvation in its purest form. A resource (notification delivery) is repeatedly denied to a requesting thread (the incident responder) even though that same resource is being allocated to requesters in other regions without interruption.

LLM Inference and Token Schedulers: Priority Starvation

Modern LLM inference introduces a third deadlock pattern specific to token-generation scheduling. The transformer architecture and attention mechanisms that underpin these inference pipelines are examined in large language models in practice. The analysis in this section is intentionally pattern-based: it models known scheduler behavior in production inference systems without claiming one canonical named outage as the sole evidence anchor.

The Token Slot as a Bottleneck Resource

When an LLM processes inference requests, it converts input text into tokens and generates output tokens one at a time — autoregressive generation, where each token requires a full forward pass through the model. The bottleneck is the decoding loop. On resource-constrained systems (GPUs with finite memory, inference clusters with limited parallelism), the number of concurrent decoding operations is bounded. That bound is the token slot.

Token slots are mutual-exclusion resources. One request per slot during decoding. Full slots mean new requests wait. So far, straightforward. But add priorities, and the dynamics shift dramatically: a low-priority request can be starved indefinitely by a continuous stream of higher-priority arrivals, each one jumping the queue before the waiting request ever reaches the front.

Starvation Under Priority Unfairness

Picture an LLM inference system serving both interactive (user-facing) and batch (offline) requests, with priority allocated to interactive traffic. As long as interactive requests arrive faster than they complete, batch requests wait. And wait. And wait. The batch-scheduling system holds a queue entry; the inference scheduler waits for slot availability — but never allocates one to the batch request because higher-priority interactive requests keep arriving. This is starvation, playing out in milliseconds rather than the months of a cloud-platform deadlock.

Defenses exist, borrowed directly from OS schedulers. Priority aging: as a thread waits longer, its effective priority ticks upward. After T seconds, a low-priority batch request rises high enough to compete with interactive traffic. Round-robin scheduling gives each request a time slice regardless of initial priority, which is fair but sacrifices latency for interactive users. First-come-first-serve is also fair, though it risks the convoy effect, where small interactive requests stack behind large batch jobs and their turnaround time balloons. No single policy is perfect; the right choice depends on whether your system optimizes for throughput or responsiveness.

Ordering and Deadlock Avoidance

A deadlock could emerge in an LLM system serving multiple models. Request A holds a slot on Model-X and waits for Model-Y. Request B holds a slot on Model-Y and waits for Model-X (for example, in a multi-step reasoning pipeline where one model’s output feeds into another). If both models have bounded concurrent-access limits, a deadlock can form. Mitigation requires either (1) allowing preemption (kill one request to free slots), (2) enforcing a resource-acquisition order globally (always acquire Model-X before Model-Y), or (3) breaking the wait cycle through admission control (reject Request B if it would create a cycle).
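Option (2) can be sketched in a few lines. The example below assumes one slot per model, represented by a `threading.Lock`; the names `model_locks` and `run_pipeline` are hypothetical. Each request sorts the models it needs and acquires them in that single global order, regardless of the order in which its pipeline will actually use them.

```python
import threading
from typing import List

# Hypothetical per-model slots; one slot each keeps the sketch small.
model_locks = {"model-x": threading.Lock(), "model-y": threading.Lock()}
completed: List[str] = []


def run_pipeline(request_id: str, models_needed: List[str]) -> None:
    # Acquire in one global order (sorted by name), whatever order the
    # pipeline later uses the models in. This rules out circular wait.
    ordered = sorted(models_needed)
    for name in ordered:
        model_locks[name].acquire()
    try:
        completed.append(request_id)  # stand-in for the multi-model work
    finally:
        for name in reversed(ordered):
            model_locks[name].release()


# Request A wants X then Y; Request B wants Y then X.
a = threading.Thread(target=run_pipeline, args=("A", ["model-x", "model-y"]))
b = threading.Thread(target=run_pipeline, args=("B", ["model-y", "model-x"]))
a.start()
b.start()
a.join(timeout=5)
b.join(timeout=5)
```

Both requests finish because neither can hold Model-Y while waiting for Model-X. The cost is that a request may hold a model earlier than it strictly needs it, which trades some utilization for safety.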

Actionable Lessons: Breaking the Cycle

Lesson 1: Make Circular Wait Visible Through Static Resource Ordering

Deadlock is most easily prevented by enforcing a total order on resource acquisition, a technique demonstrated by Dijkstra’s resource hierarchy solution to the dining philosophers problem. This applies uniformly across domains.

In supply chains: Maintain a dependency graph that enforces acyclic resolution. Implement cycle detection during package ingestion. Block any transitive dependency that would create a cycle.

In cloud platforms: Define a canonical resource-allocation order. For example, acquire jurisdiction controls before regional partnerships. Allocate data-custody rights before operational responsibilities. This prevents the hold-and-wait cycle.

In LLM systems: If a multi-step pipeline stages requests through multiple models, enforce a pre-declared model ordering. Never allow Request A to hold Model-X while waiting for Model-Y if Request B already holds Model-Y and is waiting for Model-X.

Actionable control: Implement static topology analysis. Detect all cycles. Block configurations that would permit cycles.
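For dependency graphs, static topology analysis can lean on Python's standard `graphlib` module (Python 3.9+). The sketch below is illustrative only: `resolution_order` and the package names in the usage note are hypothetical, and the graph maps each package to the set of packages it depends on.

```python
from graphlib import CycleError, TopologicalSorter
from typing import Dict, List, Optional, Set, Tuple


def resolution_order(
    dependencies: Dict[str, Set[str]],
) -> Tuple[Optional[List[str]], Optional[List[str]]]:
    """Return (install_order, cycle); exactly one of the two is not None.

    dependencies maps a package name to the packages it depends on.
    """
    try:
        # static_order() yields dependencies before their dependents.
        order = list(TopologicalSorter(dependencies).static_order())
        return order, None
    except CycleError as err:
        # graphlib reports the detected cycle as the second exception argument.
        return None, err.args[1]
```

With `{"app": {"http-client"}, "http-client": {"url-utils"}, "url-utils": set()}` the function returns an order in which `url-utils` precedes `http-client`, which precedes `app`. With `{"a": {"b"}, "b": {"c"}, "c": {"a"}}` it returns the offending cycle instead, which an ingestion gate could use to block the configuration.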

Lesson 2: Break Hold-and-Wait Through Mandatory Atomicity or Full Release

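Operating systems offer two ways to prevent hold-and-wait. The first requires a thread to declare every resource it needs in advance and acquire all of them atomically or none. The second forces a thread to release all held resources before requesting new ones. Dijkstra’s Banker’s algorithm (c. 1965; EWD-108) is a related avoidance technique: threads declare their maximum claims in advance, and the allocator grants a request only if the resulting state is still safe.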
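For reference, the safety test usually associated with the Banker's algorithm can be sketched as follows. It asks whether, from the current allocation, some order exists in which every thread can still obtain its declared maximum and finish. The function name `is_safe` and the list-of-lists encoding are illustrative assumptions.

```python
from typing import List


def is_safe(
    available: List[int],
    allocation: List[List[int]],
    maximum: List[List[int]],
) -> bool:
    """Return True if every thread can finish in some order.

    available[j]     free units of resource type j
    allocation[i][j] units of type j currently held by thread i
    maximum[i][j]    declared maximum claim of thread i on type j
    """
    n = len(allocation)
    work = list(available)
    need = [[m - a for m, a in zip(maximum[i], allocation[i])] for i in range(n)]
    finished = [False] * n
    progressed = True
    while progressed:
        progressed = False
        for i in range(n):
            if not finished[i] and all(nd <= w for nd, w in zip(need[i], work)):
                # Thread i can run to completion and return what it holds.
                work = [w + a for w, a in zip(work, allocation[i])]
                finished[i] = True
                progressed = True
    return all(finished)
```

Two threads that each hold one unit of a single resource, each with a maximum claim of two and nothing free, form an unsafe state: `is_safe([0], [[1], [1]], [[2], [2]])` is `False`. Freeing one unit makes it safe again.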

Supply chains: Require package publishers to declare all transitive dependencies before incrementing version numbers. If a new dependency would violate the pre-declared set, reject the publication. This is atomic acquisition at a global level.

Cloud platforms: Segment lifecycle stages. A platform entering a region must complete Data Residency Setup before accepting Service Responsibility. Intermediate states where both are held but incomplete are forbidden through policy gates.

LLM systems: In a multi-model pipeline, require Request A to fully complete on Model-X and release all slots before moving to Model-Y. Pipeline requests in sequence; disallow overlapping holds across models.

Actionable control: Implement atomic-transaction semantics for resource acquisition at each level. Disallow partial holds.
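One way to disallow partial holds is an all-or-nothing acquisition helper: try each resource without blocking, and if any is unavailable, release everything already taken. The sketch below uses `threading.Lock` objects as stand-ins for resources; `try_acquire_all` is a hypothetical helper name.

```python
import threading


def try_acquire_all(locks) -> bool:
    """Acquire every lock in `locks`, or none of them.

    Returns True with all locks held, or False with none held.
    """
    held = []
    for lock in locks:
        if lock.acquire(blocking=False):
            held.append(lock)
        else:
            # One resource is busy: roll back so nothing is held while waiting.
            for taken in reversed(held):
                taken.release()
            return False
    return True
```

A caller that receives `False` holds nothing and can back off and retry. Retrying in a tight loop risks livelock, so a randomized delay between attempts is a common companion to this pattern.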

Lesson 3: Enforce Preemption for Critical Resources

The third Coffman condition is no preemption. Breaking it means authorizing the system to forcibly reclaim resources under certain conditions.

Supply chains: Implement credential-revocation mechanisms that can immediately invalidate a compromised maintainer credential. Frameworks such as SLSA support this by tying release trust to verifiable build provenance rather than to the maintainer credential alone. The relationship between provenance attestation and supply-chain trust is explored further in the article on data provenance in the ML lifecycle. With that separation in place, a compromised credential does not automatically grant package publication rights; the revocation system can preempt the right in real time.

Cloud platforms: Build fallback mechanisms for region-specific service delivery. If a region’s operational partner becomes unavailable, preempt the regional partnership and fail over to a global-federated model. Data residency constraints are real, but preemption of stalled operational models is essential.

LLM systems: Implement priority interruption. When a latency-critical request arrives and no slots are free, preempt a lower-priority batch job, save its state, and resume it later. This breaks the starvation cycle.

Actionable control: Design preemption policies explicitly. Define when and how resources can be forcibly reclaimed. Test preemption pathways regularly.
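An explicit preemption policy for inference slots might look like the sketch below. All names (`Job`, `SlotPool`, `admit`, `release`) are hypothetical, and the policy shown (evict the least urgent running job, keep its progress, resume it when a slot frees) is one assumption among several reasonable ones.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Job:
    name: str
    priority: int         # assumption: a higher number means more urgent
    tokens_done: int = 0  # saved progress, kept across preemption


class SlotPool:
    def __init__(self, capacity: int) -> None:
        self.capacity = capacity
        self.running: List[Job] = []
        self.paused: List[Job] = []

    def admit(self, job: Job) -> bool:
        """Run `job`, preempting the least urgent running job if required."""
        if len(self.running) < self.capacity:
            self.running.append(job)
            return True
        victim = min(self.running, key=lambda j: j.priority)
        if victim.priority >= job.priority:
            return False  # nothing less urgent to preempt; the caller must queue
        self.running.remove(victim)
        self.paused.append(victim)  # its state is retained for a later resume
        self.running.append(job)
        return True

    def release(self, job: Job) -> None:
        """Free a slot and resume the most urgent paused job, if any."""
        self.running.remove(job)
        if self.paused:
            resumed = max(self.paused, key=lambda j: j.priority)
            self.paused.remove(resumed)
            self.running.append(resumed)
```

Preemption on its own does not prevent starvation of the paused job if urgent arrivals never stop, so a policy like this is usually paired with an aging rule.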

Lesson 4: Apply Priority Aging to Prevent Starvation

Priority-flat scheduling (round-robin, FCFS) ensures no process starves, but can sacrifice latency for high-priority work. Pure priority scheduling maximizes responsiveness but can starve low-priority jobs indefinitely. Priority aging blends both: as a request waits, its effective priority increases over time, ensuring eventual access.

Supply chains: Implement tiered patch-release schedules. Critical security fixes (high initial priority) deploy immediately. Routine updates (lower initial priority) deploy with a time-window limit. After 48 hours, a routine update’s priority increases, ensuring rollout completion even if newer criticals arrive.

Cloud platforms: For user-access restoration in regions experiencing connectivity issues, implement aging: a user-restore request blocked for time T has its priority increased, eventually surpassing normal requests if still pending.

LLM systems: Track request wait time in the inference queue. After waiting for time T, a batch request’s priority is increased by a fixed increment. This ensures batch requests eventually execute even if interactive requests arrive continuously.

Actionable control: Implement wait-time tracking. Define priority-aging policies. Measure and alert when low-priority requests exceed maximum-wait thresholds without aging applied.
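A priority-aging policy can be reduced to one formula: effective priority equals base priority plus a rate multiplied by time waited. The sketch below is a toy model under stated assumptions: `AGING_RATE`, the priority values, and the one-request-per-tick `simulate` loop are all invented for illustration.

```python
from dataclasses import dataclass
from typing import List

AGING_RATE = 1.0  # hypothetical: priority points gained per second of waiting


@dataclass
class Request:
    name: str
    base_priority: float
    enqueued_at: float


def effective_priority(req: Request, now: float) -> float:
    # Waiting raises priority linearly, so no request can wait forever.
    return req.base_priority + AGING_RATE * (now - req.enqueued_at)


def pick_next(queue: List[Request], now: float) -> Request:
    chosen = max(queue, key=lambda r: effective_priority(r, now))
    queue.remove(chosen)
    return chosen


def simulate(steps: int) -> List[str]:
    """One slot, one request served per tick, one interactive arrival per tick."""
    queue = [Request("batch", 0.0, enqueued_at=0.0)]
    served: List[str] = []
    for t in range(steps):
        queue.append(Request(f"interactive-{t}", 5.0, enqueued_at=float(t)))
        served.append(pick_next(queue, now=float(t)).name)
    return served
```

In this toy model the batch request is passed over for the first few ticks, then its aged priority catches up with the fresh interactive arrivals and it is served, even though interactive traffic never pauses. Setting `AGING_RATE` to zero recovers strict priority and the batch request is never chosen.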

Lesson 5: Use Mutex and Semaphore Abstractions Correctly

A mutex enforces exactly-one access. A semaphore with N permits up to N concurrent accesses. Misusing these primitives causes contention where none was necessary.

Supply chains: Treat read access to a published package version as a counting semaphore, not a mutex. Multiple build systems should be able to read the same package version concurrently. Only publication requires mutual exclusion. Current npm design satisfies this. Problems arise when the semaphore count is set too low (e.g., publishing is rejected if any older version is still in resolution).

Cloud platforms: Treat regional compute capacity as semaphores. A region’s semaphore count represents concurrently-serviceable workloads. If regional capacity is 100 concurrent sessions and your application requests 101, the 101st waits until a session is released, and starves if none ever is. Adding capacity (increasing the semaphore count) or implementing round-robin admission reduces starvation.

LLM systems: Token slots are semaphores. If you set the token-slot count to 1 (serializing all inference), you bottleneck throughput. If you set it to N without checking N against available memory, you overcommit memory. Calibrate the semaphore count to system capacity with headroom for safety.

Actionable control: Audit all mutual-exclusion and semaphore usage. Verify counts match actual resource capacity. Test under overload.

Lesson 6: Implement First-Come-First-Serve (FCFS) or Round-Robin for Fair Scheduling

When a resource has multiple waiters and no explicit priority exists, FCFS and round-robin scheduling guarantee no process starves. The trade-off is average latency under bursty loads.

Supply chains: Apply FCFS to package-download queues during high-load events (major release days). This prevents scenarios where some developers’ builds complete while others starve due to implementation-specific queueing.

Cloud platforms: Use round-robin for API-call rate limiting. Under first-come-first-serve, short requests can queue for a long time behind slow early ones (the convoy effect); round-robin ensures each caller gets periodic access regardless of request size.

LLM systems: For inference queues without priority tiers, implement round-robin token scheduling. Each request gets a fixed time slice or token budget. This ensures long batch jobs and short interactive requests both make progress, and neither is starved by continuous arrivals of the other.

Actionable control: Define scheduling policy explicitly. Auto-generate per-system scheduling audits. Alert if starvation thresholds are exceeded.
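Round-robin with a fixed token budget is short enough to state directly. In this sketch the function name `round_robin`, the `quantum` parameter, and the request sizes in the usage note are illustrative assumptions; real inference schedulers batch at a finer grain.

```python
from collections import deque
from typing import Deque, Dict, List, Tuple


def round_robin(requests: Dict[str, int], quantum: int) -> List[str]:
    """Return the completion order under round-robin token scheduling.

    requests maps a request name to the number of tokens it still needs;
    quantum is the token budget granted per turn.
    """
    if quantum <= 0:
        raise ValueError("quantum must be positive")
    queue: Deque[Tuple[str, int]] = deque(requests.items())
    finished: List[str] = []
    while queue:
        name, remaining = queue.popleft()
        remaining -= min(quantum, remaining)  # spend this turn's budget
        if remaining == 0:
            finished.append(name)
        else:
            queue.append((name, remaining))  # back of the line for another turn
    return finished
```

With `round_robin({"batch": 100, "chat-1": 10, "chat-2": 20}, quantum=10)` the result is `["chat-1", "chat-2", "batch"]`: the short requests finish early even though the long job arrived first, and the long job still completes.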

Lesson 7: Treat Deadlock as Inevitable and Implement Detection Plus Recovery

If breaking even one of the four Coffman conditions is infeasible (which it often is in real systems), implement deadlock detection and recovery.

Supply chains: Monitor package-publication queues. If a maintainer’s credential has been held for longer than a policy-defined timeout without completing a publication event, flag the credential as potentially compromised and initiate credential revocation. This is deadlock detection (timeout) plus recovery (revocation).

Cloud platforms: Monitor regional partnerships. If a region’s operational partner has not acknowledged a heartbeat for time T, trigger fallback mechanisms. This detects the circular-wait deadlock (region waiting for partner, partner waiting for policy clearance) and recovers by breaking the circle.

LLM systems: Monitor request queues. If a request has not completed within a timeout and all token slots are occupied by requests that also cannot progress, deadlock is detected. Recovery is to preempt one request and retry.

Actionable control: Implement timeouts, heartbeats, and deadlock-detection dashboards. Define recovery procedures in advance. Test recovery playbooks regularly.
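Timeout-based detection for token slots can be sketched as below. The record type `SlotHold`, the functions `deadlock_suspected` and `recover`, and the victim-selection rule are all assumptions made for illustration. A timeout only indicates a suspected deadlock; a slow but healthy request looks the same from the outside.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class SlotHold:
    request_id: str
    acquired_at: float       # when the slot was granted
    last_progress_at: float  # last time the request emitted a token


def deadlock_suspected(
    holds: List[SlotHold], capacity: int, now: float, timeout: float
) -> bool:
    """True when every slot is occupied and no holder progressed within `timeout`."""
    if not holds or len(holds) < capacity:
        return False
    return all(now - h.last_progress_at > timeout for h in holds)


def recover(
    holds: List[SlotHold], capacity: int, now: float, timeout: float
) -> Optional[SlotHold]:
    """Preempt one holder if deadlock is suspected; return it for retry."""
    if not deadlock_suspected(holds, capacity, now, timeout):
        return None
    # Assumption: evicting the most recently admitted request loses the least work.
    victim = max(holds, key=lambda h: h.acquired_at)
    holds.remove(victim)
    return victim
```

Passing `now` explicitly keeps the logic testable without real clocks; in a running system it would typically come from `time.monotonic()`.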

Lesson 8: Use Spooling and Indirection to Decouple Resource Producers from Consumers

Spooling is an operating-systems technique where producers write to a temporary intermediate resource (spool), and consumers read from the spool. This breaks direct hold-and-wait by introducing an indirection layer.

Supply chains: Implement package mirrors and caches. Developers do not directly publish to npm; they publish to a private mirror that decouples their credential from the public registry. The mirror runs a scheduled sync with npm, decoupling the timing of publication from the timing of dependency resolution.

Cloud platforms: Use message queues (e.g., Azure Service Bus) to decouple application requests from regional compute resources. Applications write requests to a queue. Regional processors consume from the queue. If a region is unavailable, requests spool; they do not block the application or hold the application’s credentials.

LLM systems: Buffer inference requests in a queue rather than directly allocating tokens. The queue acts as a spool. This decouples request submission (which does not hold tokens) from token allocation (which does). Requests can be prioritized, rerouted, or resubmitted later without held resources.

Actionable control: Identify critical hold-and-wait patterns. Introduce spool layers to decouple producers and consumers.
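A spool layer can be illustrated with Python's standard `queue.Queue`. In this sketch `submit`, `regional_worker`, the `STOP` sentinel, and the queue size are hypothetical; the upper-casing step is only a stand-in for real processing.

```python
import queue
import threading
from typing import List

spool: "queue.Queue[object]" = queue.Queue(maxsize=100)  # bounded spool
results: List[str] = []
STOP = object()  # sentinel telling the consumer to exit


def submit(request: str) -> bool:
    """Producer side: never blocks and holds no downstream resource."""
    try:
        spool.put_nowait(request)
        return True
    except queue.Full:
        return False  # spool is full; the caller decides whether to retry


def regional_worker() -> None:
    """Consumer side: drains the spool whenever the region is available."""
    while True:
        item = spool.get()
        if item is STOP:
            break
        results.append(str(item).upper())  # stand-in for real processing


# The region is "unavailable" at first: requests accumulate without blocking.
accepted = [submit(r) for r in ["a", "b", "c"]]

# The region comes back: a worker starts and drains the backlog in order.
worker = threading.Thread(target=regional_worker)
worker.start()
spool.put(STOP)
worker.join(timeout=5)
```

The producer returns immediately in every case. The bounded size matters: an unbounded spool turns an outage into unbounded memory growth, so a full spool is surfaced to the caller rather than hidden.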

Lesson 9: Engineer Contingency Through Resource Redundancy and Failover

Deadlock prevention is ideal but not always achievable. Resilience engineering accepts deadlock as possible and minimizes impact through redundancy.

Supply chains: Maintain redundant package mirrors in multiple registries. If npm experiences a supply-chain deadlock event, applications can fall back to alternative mirrors. This does not prevent the deadlock; it makes the deadlock survivable.

Cloud platforms: Offer multi-region deployments with explicit cross-region fallback. If one region experiences a hold-and-wait deadlock (waiting for a partner that has become unavailable), applications can failover to another region that lacks the same constraint.

LLM systems: Deploy the same model across multiple inference clusters. If one cluster experiences token-slot deadlock, requests can be rerouted to another cluster. This distributes deadlock risk across independent resources.

Actionable control: Identify single points of deadlock. Provision redundancy. Automate failover. Test failover paths under load.
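Automated failover across independent clusters can be sketched as an ordered list of callables. The exception type `ClusterUnavailable` and the `route` function are hypothetical, and each cluster is modeled as a plain function so the logic stays visible.

```python
from typing import Callable, Optional, Sequence


class ClusterUnavailable(Exception):
    """Raised by a cluster client when it cannot accept the request."""


def route(prompt: str, clusters: Sequence[Callable[[str], str]]) -> str:
    """Try each cluster in order and return the first successful response."""
    last_error: Optional[Exception] = None
    for cluster in clusters:
        try:
            return cluster(prompt)
        except ClusterUnavailable as err:
            last_error = err  # remember the failure and try the next cluster
    raise ClusterUnavailable("all clusters unavailable") from last_error
```

Failover of this kind only helps when the fallback cluster does not share the constraint that stalled the primary; two clusters behind the same scheduler or the same regional partner are not independent.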

Lesson 10: Make Resource Ownership and Accountability Explicit

Hidden resource ownership breeds hidden deadlock. When it is unclear who holds a resource or who has authority to release it, deadlock recovery becomes difficult.

Supply chains: Maintain a canonical registry of package-maintainer identities and credential lifecycles. When a maintainer changes (person leaves, team reorganization), explicitly revoke old credentials and issue new ones. Document transitions. This prevents scenarios where a credential is held but no one knows who should revoke it if compromise occurs.

Cloud platforms: Publish responsibility matrices that show which entity (global vendor, regional partner, customer) owns each resource dimension: data, operations, compliance, escalation. Ambiguity breeds deadlock.

LLM systems: Document which system component owns each token-slot allocation. If slots are managed by a scheduler, ensure the scheduler’s design is auditable and its decisions are logged. If slots are held by an inference worker, ensure the worker’s lifecycle (startup, failure, preemption) is well-defined.

Actionable control: Generate resource-ownership matrices. Audit them quarterly. Update them when responsibilities change.

Critical Evaluation: Where Deadlock Theory Illuminates and Where It Reaches Limits

The mapping of OS-level deadlock theory onto supply chains, cloud platforms, and LLM systems is powerful. It is also incomplete — and acknowledging that gap matters more than pretending otherwise. The theory exposes circular dependencies and hold-and-wait patterns with unusual clarity; the four Coffman conditions hand engineers a diagnostic checklist that transfers across domains with minimal adaptation.

But a credible counterposition deserves attention. Operating-system deadlock is determined by algorithm acting on precisely defined resources. The “resources” in supply-chain, cloud, and LLM contexts? Often ambiguous, socially constructed, or subject to regulatory change that no scheduler can anticipate. Coffman et al. (1971) assumed a closed system with enumerable resources. Real supply chains are open systems where new dependencies materialise at arbitrary times. Cloud-platform contention frequently traces to political risk rather than resource scarcity. LLM inference contention depends on workload distributions that are non-stationary — yesterday’s traffic pattern is tomorrow’s irrelevant baseline. The four-condition framework therefore serves best as a diagnostic lens, not a formal proof system, when applied outside the kernel.

Where the analogy reaches its limits is in the human and policy factors. Supply-chain deadlock involves vendor decisions, regulatory constraints, and social-engineering vulnerability. Cloud-platform deadlock mixes technical control with geopolitical factors that no algorithm can resolve. These domains require policy and human judgment in addition to systems theory.

The evidence base for this synthesis spans three peer-reviewed supply-chain incident reports, cloud-platform documentation, LLM scheduling research, and classical OS texts dating to Dijkstra (1962-1965) and Coffman et al. (1971). The mapping to real incidents is evidence-grounded. The applicability of preventive controls is domain-specific: not all Coffman conditions can be broken in all domains, but each domain benefits from explicit analysis using this framework even if perfect prevention is unattainable.

The synthesis is qualitative by design and does not claim incident-impact quantification in this document. Metrics such as host compromise counts, downtime windows, queue latency distributions, and remediation labor-hours are essential for severity ranking, but those measures require controlled access to incident telemetry that is out of scope for this cross-domain theory paper.

Closing Discussion: Toward Resilience-Aware Design

More than five decades after Coffman, Elphick, and Shoshani formalised the conditions for deadlock — building on Dijkstra’s foundational work on semaphores, the Banker’s algorithm, and the dining philosophers problem — the same structural patterns keep surfacing in systems that nobody thinks of as “concurrent.” A supply-chain developer does not conceive of package publication as a mutual exclusion problem. Why would they? A cloud-platform architect does not frame regional partnerships as a resource-allocation puzzle. An LLM inference engineer may never map token slots to semaphore theory until the queue locks up at 3 a.m.

And yet. Each domain benefits from acknowledging this structure explicitly. Deadlock is not confined to low-level threading code. It is a structural pattern that emerges whenever resources are finite, threads compete for them, and acquisition order is not globally constrained. The corollary cuts deeper than it first appears: mitigation strategies that OS researchers developed for the kernel apply with surprising fidelity to arbitrarily higher levels of abstraction.

This article has converted Coffman’s four conditions into a diagnostic and preventive framework for three domains: supply chains, cloud platforms, and LLM systems. Each domain presents versions of the same four conditions. Each can apply modern scheduling, preemption, and spooling techniques to break the cycle. The gains are operational: reduced incident severity, faster recovery, and more predictable resource behavior.

The lessons are ordered from most general to most specific, permitting teams to apply them selectively. Lesson 1 (make circular wait visible) is universally applicable. Lesson 4 (priority aging) requires explicit scheduling infrastructure. Teams without mature resource-accounting systems may find Lesson 10 (make ownership explicit) a better starting point than Lessons 3 and 8.

The underlying principle is consistent: design systems to prevent, detect, and recover from structural deadlock conditions. Teams that operationalize this principle can treat resource contention as a managed engineering variable rather than as an unpredictable outage source.

Detailed domain evidence is available in related articles: the Axios supply chain compromise, digital sovereignty and cloud fragmentation, large language models in practice, and data provenance in the ML lifecycle.

Questions on Deadlock and Contention

What are the four Coffman conditions, and why do they still matter for modern systems?

Deadlock can occur only when all four of the following hold simultaneously: mutual exclusion (a resource is held exclusively by one thread), hold and wait (a thread holds one resource while requesting another), no preemption (held resources cannot be forcibly reclaimed), and circular wait (a cycle exists where each thread waits for a resource held by the next). The conditions are jointly necessary; when every resource has a single instance, they are also sufficient. Eliminating any single condition prevents deadlock entirely. The full theoretical foundation and formal definition appear in the Understanding Deadlock section above.

How does deadlock theory map to software supply chain security failures?

Supply chain attacks exhibit all four Coffman conditions at the credential and dependency level. A maintainer credential enforces mutual exclusion over package publication; a compromised developer holds a build environment while waiting for npm packages (hold and wait); once a malicious version is downloaded it cannot be universally recalled (no preemption); and transitive dependency cycles create circular wait across build graphs. The Axios npm supply chain compromise article reconstructs a confirmed March 2026 incident through this exact framework.

How do deadlock and starvation differ in distributed and AI-serving systems?

Deadlock is a cycle in which no involved thread can make progress: every waiting thread holds a resource that another waiting thread needs, so the entire set is permanently blocked. Starvation is a state in which one specific thread is indefinitely denied access while other threads do make progress. Round-robin and first-come-first-serve scheduling prevent starvation; resource ordering, atomic acquisition, and the Banker’s algorithm prevent deadlock. Both pathologies appear in LLM inference queues and software supply chains.

Which controls most effectively prevent deadlock in distributed production systems?

Four primary strategies follow from the Coffman conditions: three break a condition outright, and the fourth stops a cycle from forming at runtime. First, enforce a total order on resource acquisition to eliminate circular wait. Second, require atomic multi-resource acquisition or full release before requesting new resources to eliminate hold and wait. Third, implement preemption authority so the system can forcibly reclaim a blocked resource. Fourth, run cycle detection on the live resource-request graph and reject configurations that would complete a cycle before they execute. Mutual exclusion is usually inherent to the resource and is rarely the condition that can be removed. Lessons 1 through 3 in this article cover practical implementations for supply chains, cloud platforms, and LLM inference systems.
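The fourth strategy can be sketched as a wait-for graph that refuses any new wait edge that would close a cycle. This is a minimal, single-process illustration: the `WaitForGraph` class and its method names are hypothetical, and a production system would also need locking around the graph and a distributed view of who holds what.

```python
from collections import defaultdict


class WaitForGraph:
    """Tracks which party is waiting on which holder."""

    def __init__(self):
        self._edges = defaultdict(set)  # waiter -> holders it is waiting on

    def _reaches(self, start, target):
        # Iterative depth-first search along existing wait edges.
        stack, seen = [start], set()
        while stack:
            node = stack.pop()
            if node == target:
                return True
            if node in seen:
                continue
            seen.add(node)
            stack.extend(self._edges.get(node, ()))
        return False

    def try_wait(self, waiter, holder):
        """Record waiter -> holder unless that edge would complete a cycle."""
        if waiter == holder or self._reaches(holder, waiter):
            return False  # rejected: the request would create circular wait
        self._edges[waiter].add(holder)
        return True

    def stop_waiting(self, waiter, holder):
        self._edges[waiter].discard(holder)
```

A rejected `try_wait` is the signal to back off, release held resources, or escalate to whatever preemption authority exists.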

What is priority aging, and how does it apply to LLM inference scheduling?

Priority aging is a scheduling technique in which a waiting thread’s effective priority increases incrementally over time, ensuring low-priority requests are eventually scheduled even when higher-priority requests arrive continuously. It prevents indefinite starvation while preserving responsiveness for high-priority work. Applied to LLM inference queues, priority aging ensures that batch offline jobs are not permanently blocked by interactive user requests, which is the scenario described in the LLM Inference and Token Schedulers section.
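A minimal sketch of aging follows, assuming a linear boost per second of waiting. The `Job` record, the `effective_priority` and `pick_next` functions, and the `aging_rate` parameter are illustrative names, not taken from any particular inference server.

```python
from dataclasses import dataclass


@dataclass
class Job:
    name: str
    base_priority: int  # higher means more urgent
    enqueued_at: float  # arrival time in seconds


def effective_priority(job, now, aging_rate=1.0):
    # Priority grows linearly with time spent waiting (an assumed policy).
    return job.base_priority + aging_rate * (now - job.enqueued_at)


def pick_next(queue, now, aging_rate=1.0):
    """Remove and return the job with the highest aged priority.

    Ties go to the job that has waited longest.
    """
    job = max(
        queue,
        key=lambda j: (effective_priority(j, now, aging_rate), -j.enqueued_at),
    )
    queue.remove(job)
    return job
```

With `aging_rate=1.0`, a batch job of base priority 1 overtakes freshly arrived priority-10 interactive requests once it has waited more than nine seconds; tuning the rate trades batch latency against interactive responsiveness.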

How can Coffman-condition analysis improve LLM inference and token-slot design?

Token slots in LLM inference are mutual-exclusion resources: exactly one inference request occupies a decoding slot during autoregressive generation. In a multi-model pipeline, if Request A holds a slot on Model-X while waiting for Model-Y, and Request B holds Model-Y while waiting for Model-X, all four Coffman conditions hold and a deadlock can form. Prevention requires either a global model-acquisition ordering (always acquire Model-X before Model-Y) or full-release semantics, where Request A must complete and release all slots before acquiring resources on a second model. Lessons 1 and 2 address both strategies.
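The global-ordering option can be sketched with one lock per model standing in for a decoding slot. Everything named here is an assumption for illustration: `MODEL_SLOTS`, `acquire_in_order`, `run_pipeline`, and the choice of sorted model names as the total order.

```python
import threading
from contextlib import ExitStack

# One lock per model stands in for an exclusive decoding slot (assumed).
MODEL_SLOTS = {"model-x": threading.Lock(), "model-y": threading.Lock()}


def acquire_in_order(model_names):
    """Acquire slots in sorted-name order, whatever order was requested.

    Returns an ExitStack that releases every acquired slot when closed.
    """
    stack = ExitStack()
    try:
        for name in sorted(set(model_names)):
            stack.enter_context(MODEL_SLOTS[name])
    except BaseException:
        stack.close()  # release anything already held before re-raising
        raise
    return stack


def run_pipeline(request_id, model_names, log):
    with acquire_in_order(model_names):
        log.append(request_id)  # placeholder for the multi-model work
```

Because every request takes `model-x` before `model-y`, the scenario in which Request A and Request B each hold one slot and wait for the other cannot arise, even when callers list the models in opposite orders.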

Technical Appendix

Author and Source Credibility

This article is authored by Zenith Law and synthesises findings from foundational academic literature on concurrency theory, including Dijkstra’s seminal work on mutual exclusion, Coffman et al.’s deadlock conditions taxonomy in ACM Computing Surveys, and established OS textbooks by Silberschatz and Tanenbaum. The cross-domain mappings to supply-chain security, cloud platforms, and LLM inference scheduling draw on the SLSA v1.0 framework specification alongside these classical computer science sources.

Appendix Table of Contents

- Author and Source Credibility

- Citability Snapshot

- Authoritative Reference Set

- Concurrency Term Reference

Citability Snapshot

| Metric | Value | Citability value |
|---|---|---|
| Cross-domain contexts mapped with Coffman logic | 3 | Shows theory portability across domains |
| Coffman conditions used in analysis | 4 | Preserves formal deadlock completeness |
| Scheduling or prevention strategies highlighted | 6 | Supports actionable systems design takeaways |
| FAQ entries with prevention guidance | 6 | Improves answer-engine extraction depth |

Synthesis note: Deadlock prevention can be engineered by systematically breaking at least one Coffman condition in the targeted execution path.

Authoritative Reference Set

- NIST Cybersecurity Framework (.gov)

- CISA Secure by Design (.gov)

- CMU Software Engineering Institute (.edu)

Concurrency Term Reference

Mutex (Mutual Exclusion): A lock that enforces exactly-one access to a resource. Only one thread can hold a mutex at a time.

Semaphore: A counter-based synchronization primitive. A semaphore with count N permits up to N concurrent accesses. Threads decrement the counter on acquire; if the counter would go negative, the thread waits.

Deadlock: A state in which a set of threads cannot proceed because each thread is waiting for a resource held by another thread in the set, and the wait cycle has no break.

Coffman Conditions: Four conditions that must all hold for deadlock to occur: (1) Mutual Exclusion, (2) Hold and Wait, (3) No Preemption, (4) Circular Wait. They are jointly necessary, and also sufficient when every resource has a single instance; removing any one of them prevents deadlock.

Starvation: A state in which a thread is ready to proceed but is indefinitely denied access to a resource, even though the resource becomes available.

Preemption: The act of forcibly interrupting a thread and reclaiming resources it holds, typically to allocate them to a higher-priority thread.

Priority Aging: A scheduling technique in which a thread’s priority increases over time as it waits, eventually ensuring it will be scheduled even if higher-priority threads continue to arrive.

Round-Robin Scheduling: A scheduling algorithm that allocates a fixed time slice or token budget to each thread in turn, regardless of priority, ensuring no thread is starved indefinitely.

First-Come-First-Serve (FCFS): A scheduling algorithm that allocates resources in the order threads request them. Guarantees no starvation but can cause long tail latencies if early requests are slow.

Spooling: A technique in which producers write to an intermediate buffer (spool) and consumers read from the buffer, decoupling producer and consumer timing and preventing hold-and-wait cycles.

Resource Ordering: A deadlock-prevention technique in which a total order is imposed on resource acquisition. All threads must acquire resources in the same order, preventing circular wait.

Circular Wait: A cycle in the resource-request graph in which thread T₁ waits for a resource held by thread T₂, thread T₂ waits for a resource held by thread T₃, …, thread Tₙ waits for a resource held by thread T₁.

Hold and Wait: A condition in which a thread holds a resource while waiting for another resource. Breaking this condition prevents deadlock but requires either atomic multi-resource acquisition or forced release of all held resources before waiting.

Publication: 14 April 2026

License: Educational and research use. Substantive reuse requires attribution.

References

- [1] E. G. Coffman, M. J. Elphick, and A. Shoshani, System Deadlocks, ACM Computing Surveys, vol. 3, no. 2, pp. 67–78, 1971. doi: 10.1145/356586.356588. Accessed: 14 April 2026.

- [2] E. W. Dijkstra, Een algorithme ter voorkoming van de dodelijke omarming, 1964. Accessed: 14 April 2026.

- [3] E. W. Dijkstra, Cooperating sequential processes, in The origin of concurrent programming: from semaphores to remote procedure calls, pp. 65–138, Springer, 2002.

- [4] E. W. Dijkstra, Solution of a problem in concurrent programming control, in Pioneers and Their Contributions to Software Engineering: Conference on Software Pioneers, Bonn, June 28/29, 2001, Original Historic Contributions, pp. 289–294, Springer, 2001. Accessed: 14 April 2026.

- [5] E. W. Dijkstra, The structure of the “THE”-multiprogramming system, Communications of the ACM, vol. 11, no. 5, pp. 341–346, 1968. doi: 10.1145/363095.363143. Accessed: 14 April 2026.

- [6] A. Silberschatz, P. B. Galvin, and G. Gagne, Operating System Concepts, 9th ed., Wiley, 2013.

- [7] SLSA, About SLSA: Supply-chain Levels for Software Artifacts, 2023. Accessed: 14 April 2026.

- [8] A. S. Tanenbaum and H. Bos, Modern Operating Systems, 4th ed., Pearson, 2015.
