Support Vector Machine (SVM) benchmark results are most useful when read at the class level rather than as headline accuracy alone. Part 2 of this series analyzes UCI HAR outcomes, confusion flows, and geometry signals to show where SVM is strong, where it degrades, and why those patterns matter in practice.

Introduction

This second article in the SVM series focuses on one question: what do benchmark results actually say about model behavior once we move beyond aggregate accuracy? Using the UCI Human Activity Recognition dataset [10], we compare an RBF SVM pipeline against a Random Forest baseline and inspect class-level precision, recall, F1, confusion corridors, tuning stability, and PCA geometry signals.

If you have not read the conceptual foundation, start with Part 1: Margins, Kernels, and Core Algorithms. For system-level reliability analogies, see the companion article on deadlock and resource contention.

Scope and Claim Classification

This benchmark-focused article separates claims into three classes:

- Run-confirmed findings report the measured outcomes for this HAR experiment setup.

- Interpretive synthesis explains likely geometric or operational reasons behind observed class behavior.

- Deployment recommendations propose practical controls for production monitoring and retraining.

Results are intentionally scoped to the dataset, feature pipeline, split assumptions, and tuning grid used in this run. They should inform transfer decisions, not be treated as universal rankings for all activity-recognition contexts.

Reference and Maintenance Note

Benchmark conclusions should be revisited when key dependencies, preprocessing contracts, or dataset distributions change. Re-run parity checks and class-corridor diagnostics after material library, feature-engineering, or data-governance updates.

Benchmark Setup and Reproducibility Boundaries

The benchmark design used:

- Subject-compatible train/test split consistent with HAR conventions.

- Feature scaling fit on training data and applied unchanged to test data.

- RBF SVM with one-vs-all decomposition and grid search over $C$ and $\gamma$.

- Random Forest baseline tuned under comparable CV protocol.

R and Python implementations were aligned by hyperparameter grid and fold logic to check language-level consistency ([7]; [8]; [9]; [13]).
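
The setup above can be sketched in Python with scikit-learn. This is a minimal illustration under stated assumptions, not the pipeline used for the reported run: the data is synthetic, the subject IDs are hypothetical, and the grid values are placeholders. Because libsvm-based `SVC` decomposes multiclass problems one-vs-one internally, the sketch wraps it in `OneVsRestClassifier` to match the one-vs-all decomposition described here.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import GridSearchCV, GroupKFold
from sklearn.multiclass import OneVsRestClassifier
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Synthetic stand-in for the HAR training features (NOT the real data).
X, y = make_classification(n_samples=300, n_features=20, n_informative=8,
                           n_classes=3, random_state=0)
# Hypothetical subject IDs: 10 subjects, 30 windows each.
subjects = np.repeat(np.arange(10), 30)

# Folds never split one subject across training and validation.
cv = GroupKFold(n_splits=5)

# The scaler sits inside the pipeline, so it is refit on each training fold only.
svm_pipe = Pipeline([
    ("scale", StandardScaler()),
    ("svm", OneVsRestClassifier(SVC(kernel="rbf"))),
])
svm_grid = {
    "svm__estimator__C": [1, 10, 50, 100],   # illustrative values
    "svm__estimator__gamma": [1e-3, 1e-2],   # illustrative values
}
svm_search = GridSearchCV(svm_pipe, svm_grid, cv=cv, scoring="f1_macro")
svm_search.fit(X, y, groups=subjects)

# Random Forest baseline tuned with the same folds and scoring.
rf_grid = {"max_features": ["sqrt", 0.3, 0.5]}  # rough analogue of mtry
rf_search = GridSearchCV(
    RandomForestClassifier(n_estimators=50, random_state=0),
    rf_grid, cv=cv, scoring="f1_macro",
)
rf_search.fit(X, y, groups=subjects)

print("SVM:", svm_search.best_params_, round(svm_search.best_score_, 3))
print("RF: ", rf_search.best_params_, round(rf_search.best_score_, 3))
```

Sharing one `cv` object between both searches is what keeps the comparison "under comparable CV protocol": both models see identical fold boundaries.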

Scope note: This is a single-dataset, single-task benchmark. It supports careful operational inference for sensor-based activity classification, not universal model ranking.

Aggregate Metrics: Useful but Insufficient

Top-level outcomes from this run:

| Metric | SVM | RF |
|---|---|---|
| Accuracy | 0.8829 | 0.9396 |
| Macro F1 | 0.8808 | 0.9370 |
| Kappa | 0.8595 | 0.9274 |

At first glance, RF is the stronger overall fit. In this run, that is supported by higher accuracy, macro F1, and kappa. The conclusion is still incomplete, because operational risk sits in specific class transitions, not in averages.

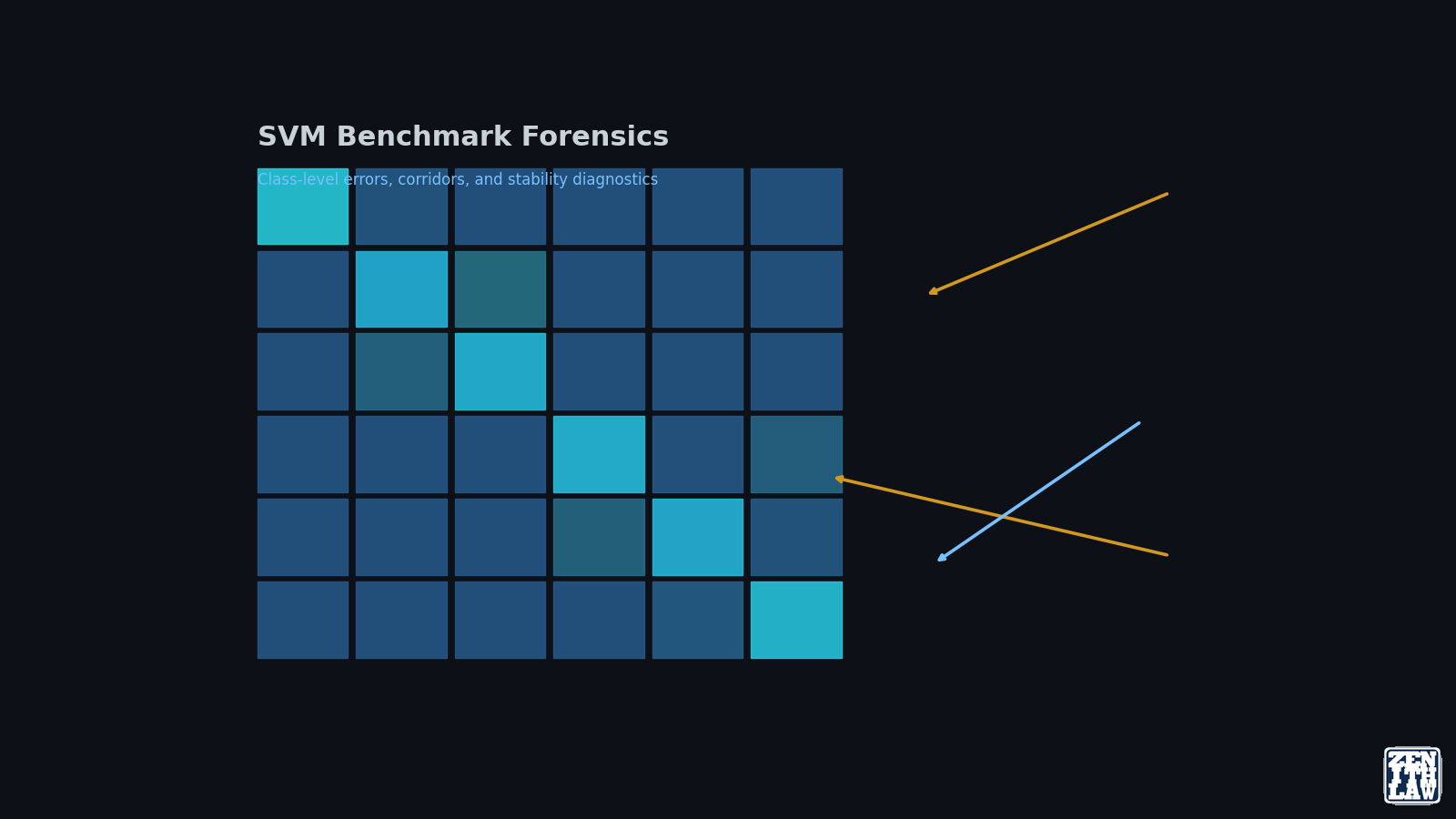
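
To make the three summary metrics concrete, the following sketch derives accuracy, macro F1, and Cohen's kappa from a confusion matrix using only the standard library. The helper name `summary_metrics` and the 3-class counts are invented for illustration and are not taken from this run.

```python
def summary_metrics(cm):
    """Accuracy, macro F1, and Cohen's kappa from a square confusion matrix.

    cm[i][j] is the number of samples with true class i predicted as class j.
    """
    k = len(cm)
    total = sum(sum(row) for row in cm)
    row_sums = [sum(cm[i]) for i in range(k)]                        # true counts
    col_sums = [sum(cm[i][j] for i in range(k)) for j in range(k)]   # predicted counts

    accuracy = sum(cm[i][i] for i in range(k)) / total

    # Per-class F1 = 2*TP / (2*TP + FP + FN) = 2*TP / (row_sum + col_sum).
    f1_scores = []
    for i in range(k):
        denom = row_sums[i] + col_sums[i]
        f1_scores.append(2 * cm[i][i] / denom if denom else 0.0)
    macro_f1 = sum(f1_scores) / k

    # Kappa corrects observed agreement for agreement expected by chance.
    expected = sum(row_sums[i] * col_sums[i] for i in range(k)) / total ** 2
    kappa = (accuracy - expected) / (1 - expected)
    return accuracy, macro_f1, kappa


# Invented counts: class 0 is perfect, classes 1 and 2 confuse each other.
toy_cm = [
    [50, 0, 0],
    [0, 30, 20],
    [0, 5, 45],
]
accuracy, macro_f1, kappa = summary_metrics(toy_cm)
print(round(accuracy, 4), round(macro_f1, 4), round(kappa, 4))
```

In this toy case every error sits in a single class pair, yet none of the three summaries says so. That is the gap the class-level diagnostics below are meant to close.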

Class-Level Diagnostics: Where SVM Holds and Slips

Selected class profile:

- LAYING: near-ceiling SVM behavior.

- SITTING and STANDING: dominant static-class confusion corridor.

- WALKING-related subclasses: overlap-driven dynamic-class spillovers.

SVM class asymmetry in this run:

- SITTING recall is high while precision drops, signaling over-assignment pressure.

- STANDING precision is high while recall drops, signaling conservative boundary placement.

- WALKING_UPSTAIRS and WALKING_DOWNSTAIRS show structured bidirectional errors.

This pattern is coherent with kernel locality behavior and neighborhood overlap in transformed space ([11]; [12]; [9]).
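
The precision/recall asymmetry described above can be read directly off a confusion matrix. The sketch below uses invented counts chosen to mimic the pattern (they are not the measured values), and `per_class_precision_recall` is a hypothetical helper name.

```python
def per_class_precision_recall(cm, labels):
    """Per-class precision and recall; cm[i][j] = true class i predicted as j."""
    report = {}
    for i, name in enumerate(labels):
        tp = cm[i][i]
        predicted = sum(row[i] for row in cm)  # column sum: everything assigned to class i
        actual = sum(cm[i])                    # row sum: everything that truly is class i
        report[name] = {
            "precision": tp / predicted if predicted else 0.0,
            "recall": tp / actual if actual else 0.0,
        }
    return report


labels = ["SITTING", "STANDING", "LAYING"]
# Invented counts: many STANDING windows are pulled into SITTING.
toy_cm = [
    [45, 5, 0],   # true SITTING
    [20, 30, 0],  # true STANDING
    [0, 0, 50],   # true LAYING
]
report = per_class_precision_recall(toy_cm, labels)
for name in labels:
    print(name, {k: round(v, 3) for k, v in report[name].items()})
```

Here SITTING absorbs STANDING windows, so its recall stays high while its precision falls, and STANDING shows the mirror image. The two asymmetries are two views of the same corridor.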

Error Corridors, Not Random Noise

Largest SVM confusion transitions in this run:

- STANDING -> SITTING.

- WALKING -> WALKING_DOWNSTAIRS.

- WALKING_UPSTAIRS -> WALKING.

RF shows the same corridors at lower volume, which suggests that both models face the same ambiguity zones and that tree-based partitioning suppresses them more effectively in this feature geometry.

Operational implication: treat these corridors as monitoring units. In production dashboards, pairwise transition counts are often more actionable than global F1.
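
One way to turn corridors into monitoring units is to rank the off-diagonal cells of the confusion matrix. The sketch below is a minimal version of that idea; `top_corridors` is a hypothetical helper, and the counts are invented to echo the ordering reported above rather than copied from the run.

```python
def top_corridors(cm, labels, n=3):
    """Return the n largest off-diagonal cells as (true, predicted, count)."""
    cells = [
        (labels[i], labels[j], cm[i][j])
        for i in range(len(labels))
        for j in range(len(labels))
        if i != j and cm[i][j] > 0
    ]
    return sorted(cells, key=lambda cell: cell[2], reverse=True)[:n]


labels = ["WALKING", "WALKING_UPSTAIRS", "WALKING_DOWNSTAIRS",
          "SITTING", "STANDING", "LAYING"]
# Invented counts; rows are true classes, columns are predictions.
toy_cm = [
    [80, 3, 12, 0, 0, 0],
    [10, 85, 4, 0, 0, 0],
    [2, 5, 90, 0, 0, 0],
    [0, 0, 0, 88, 6, 1],
    [0, 0, 0, 25, 70, 0],
    [0, 0, 0, 0, 0, 100],
]
corridors = top_corridors(toy_cm, labels)
for true_label, predicted_label, count in corridors:
    print(f"{true_label} -> {predicted_label}: {count}")
```

Logging these tuples per evaluation window gives a dashboard series for each corridor, which is usually easier to alert on than a single global score.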

Error Topology and Boundary Geometry

The confusion pattern indicates a geometry mismatch, not random instability. LAYING remains easy, while posture-adjacent and stair-adjacent classes concentrate most errors. This is consistent with the theory that margin methods perform best when local neighborhoods retain separability under the selected kernel width ([1]; [2]).

The practical takeaway is that class-pair topology should be treated as a first-class artifact:

- Static posture pairs often require feature redesign around orientation and transition context.

- Gait subclasses often require windowing or frequency features that reduce local overlap.

- Aggregate metrics should be interpreted as summaries, not deployment decisions.

Why RF Wins Here Without Invalidating SVM Theory

This benchmark does not contradict large-margin foundations. It shows that tree ensembles can better absorb overlap-heavy feature neighborhoods in this dataset. SVM remains theoretically coherent and operationally useful, but not the strongest empirical fit for these class corridors in this run.

Model selection therefore becomes conditional:

- Use SVM when boundary control and disciplined regularization are priorities.

- Use RF when local overlap dominates despite careful SVM tuning.

- Keep both in the candidate set until class-level risk criteria are met.

Calibration and Decision-Threshold Implications

Where outputs feed thresholded actions, margin quality and probability quality must be audited separately. Platt-style calibration and pairwise coupling literature provide workable pathways, but calibration quality should be tested per class because corridor classes can remain overconfident even when macro metrics are strong ([3]; [4]).
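
A per-class calibration check can be sketched as a one-vs-rest expected calibration error (ECE): for a given class, bin its predicted probabilities and compare the mean confidence in each bin with the observed frequency of that class. The function name, the equal-width binning, and the toy probabilities below are illustrative assumptions, not the diagnostics used in this benchmark.

```python
def classwise_ece(probs, y_true, class_index, n_bins=10):
    """One-vs-rest expected calibration error for a single class.

    probs[k][c] is the predicted probability of class c for sample k.
    """
    bins = [[] for _ in range(n_bins)]
    for row, label in zip(probs, y_true):
        p = row[class_index]
        b = min(int(p * n_bins), n_bins - 1)  # p == 1.0 falls in the last bin
        bins[b].append((p, 1.0 if label == class_index else 0.0))

    ece = 0.0
    for bucket in bins:
        if not bucket:
            continue
        mean_conf = sum(p for p, _ in bucket) / len(bucket)
        observed = sum(hit for _, hit in bucket) / len(bucket)
        # Weight each bin's gap by the share of samples it holds.
        ece += len(bucket) / len(y_true) * abs(mean_conf - observed)
    return ece


# Toy case 1: predictions of 0.75 for class 0 are right 3 times out of 4.
calibrated = classwise_ece([[0.75, 0.25]] * 4, [0, 0, 0, 1], class_index=0)
# Toy case 2: predictions of 0.90 for class 0 are right only 2 times out of 4.
overconfident = classwise_ece([[0.9, 0.1]] * 4, [0, 0, 1, 1], class_index=0)
print(calibrated, round(overconfident, 3))
```

Running this per class, and especially for corridor classes such as SITTING and STANDING, exposes overconfidence that a macro-level calibration score can average away.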

Robustness Checks Before Promotion

Before promoting this benchmark pattern into production policy, add four checks:

- Grouped validation by subject/entity to reduce split optimism.

- Stability analysis around near-optimal hyperparameter neighborhoods.

- Class-specific calibration diagnostics.

- Comparator retention over successive retraining cycles, especially when class frequencies drift.

Hyperparameter Stability Signal

SVM reached its best CV region around $\gamma=0.001$ and $C=50$, then softened at higher $C$. RF response across tested mtry values was flatter.

Interpretation:

- SVM delivered competitive quality but with a narrower stable region.

- RF delivered stronger quality with broader tuning tolerance.

Under frequent retraining, that difference changes operational burden and drift risk.
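
A simple way to quantify "narrower stable region" is the share of grid cells whose CV score falls within a tolerance of the best score. The sketch below assumes CV results are available as a plain dictionary; the scores are invented for illustration, and both `stable_fraction` and the 0.01 tolerance are hypothetical choices.

```python
def stable_fraction(cv_scores, tolerance=0.01):
    """Share of grid cells scoring within `tolerance` of the best cell."""
    best = max(cv_scores.values())
    near_best = [params for params, score in cv_scores.items()
                 if best - score <= tolerance]
    return len(near_best) / len(cv_scores), near_best


# Invented mean CV scores keyed by (C, gamma) for the SVM grid.
svm_scores = {
    (1, 0.001): 0.850, (10, 0.001): 0.880,
    (50, 0.001): 0.900, (100, 0.001): 0.895,
    (50, 0.01): 0.860, (100, 0.01): 0.840,
}
# Invented mean CV scores keyed by mtry for the RF grid.
rf_scores = {2: 0.930, 5: 0.935, 10: 0.932, 20: 0.928}

svm_share, svm_cells = stable_fraction(svm_scores)
rf_share, rf_cells = stable_fraction(rf_scores)
print("SVM stable share:", round(svm_share, 3), svm_cells)
print("RF stable share: ", round(rf_share, 3), rf_cells)
```

A low share means small changes in data or grid resolution can move the selected model out of its best region, which is the operational burden described above.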

PCA Geometry Reading

PCA analysis showed:

- Rapid early variance capture.

- Slow tail compression: reaching higher cumulative-variance thresholds required many additional components.

- Partial macro-separation of static versus dynamic regimes.

- Persistent local overlap among walking-related subclasses.

This explains why SVM can remain strong on easy global separations while repeating specific local confusions.
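
The "rapid early capture, slow tail" reading can be checked by counting how many principal components are needed to reach each cumulative-variance threshold. The sketch below uses NumPy's SVD on synthetic low-rank data plus noise; the data, the thresholds, and the helper name `components_for_thresholds` are assumptions for illustration only.

```python
import numpy as np


def components_for_thresholds(X, thresholds=(0.80, 0.90, 0.95, 0.99)):
    """Number of principal components needed to reach each variance threshold."""
    centered = X - X.mean(axis=0)
    singular_values = np.linalg.svd(centered, compute_uv=False)
    explained = singular_values ** 2 / np.sum(singular_values ** 2)
    cumulative = np.cumsum(explained)
    counts = {}
    for t in thresholds:
        # First index where cumulative variance reaches t, converted to a count.
        needed = int(np.searchsorted(cumulative, t)) + 1
        counts[t] = min(needed, len(cumulative))
    return counts


# Synthetic stand-in: 3 strong latent directions plus isotropic noise in 30 dims.
rng = np.random.default_rng(0)
latent = rng.normal(size=(200, 3)) @ rng.normal(size=(3, 30))
X = latent + 0.3 * rng.normal(size=(200, 30))

counts = components_for_thresholds(X)
print(counts)
```

When the count jumps sharply between the lower and higher thresholds, most of the global structure lives in a few directions while the fine-grained, locally overlapping detail is spread across the tail.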

R and Python Parity: Why It Matters

Closely aligned results across this run’s R and Python pipelines, under matched preprocessing and split assumptions, suggest that observed performance differences were driven by model-data interaction rather than language artifacts. This is valuable for reproducible engineering:

- It reduces toolchain tribalism.

- It improves reproducibility across teams.

- It keeps methodological critique focused on data, assumptions, and evaluation design.
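
A parity check can be as simple as comparing the two pipelines' predictions on the same test rows. The sketch below assumes both prediction vectors have been exported in identical row order; the labels shown are made up, and `parity_report` is a hypothetical helper.

```python
from collections import Counter


def parity_report(pred_a, pred_b):
    """Agreement rate and disagreement counts between two prediction vectors."""
    if len(pred_a) != len(pred_b):
        raise ValueError("prediction vectors must cover the same test rows")
    disagreements = Counter(
        (a, b) for a, b in zip(pred_a, pred_b) if a != b
    )
    agreement = 1 - sum(disagreements.values()) / len(pred_a)
    return agreement, disagreements


# Made-up predictions standing in for the R and Python pipeline outputs.
r_preds = ["SITTING", "STANDING", "LAYING", "WALKING", "SITTING"]
py_preds = ["SITTING", "SITTING", "LAYING", "WALKING", "SITTING"]

agreement, disagreements = parity_report(r_preds, py_preds)
print(round(agreement, 3), dict(disagreements))
```

If disagreements cluster in one class pair, the likely cause is a preprocessing or fold mismatch affecting that region rather than a language-level difference.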

For a broader model-governance continuity perspective, connect this with data provenance and traceability methods.

Practical Conclusions from the Benchmark

- SVM remains strong and defensible in this task class, but not best-in-run here.

- RF is empirically superior for this dataset’s overlap-heavy boundary regions.

- Class-level diagnostics change model selection decisions more than headline metrics.

- Tuning stability should be treated as a first-class operational criterion.

Continue the Series

For implementation playbooks and deployment controls, continue with Part 3: Tuning, Monitoring, and Deployment Governance Playbook.

Frequently Asked Questions

Why is macro F1 not enough for SVM evaluation?

Macro F1 averages class behavior and can hide concentrated failure corridors that dominate real-world risk, especially in near-neighbor class pairs.

Does better RF accuracy mean SVM is a bad model?

No. It means RF is the better fit for this specific dataset geometry and operating objective; SVM can still be strong in other structured regimes.

How should I use confusion corridors in production?

Track the highest-frequency class transitions as explicit alert channels and tie retraining or threshold updates to corridor-specific drift.

Why compare R and Python if both use libsvm-style tooling?

Parity checks still matter because they expose silent preprocessing or CV mismatches and improve reproducibility across mixed-language teams.

Conclusion

The HAR benchmark shows a clear but nuanced result: SVM is robust and informative, while RF is stronger for the overlap profile in this run. The key outcome is diagnostic clarity about where and why each model family wins. Part 3 turns these diagnostics into a deployment governance playbook with explicit tuning, monitoring, and escalation controls.

References

- [1] Cortes and Vapnik, Support-Vector Networks, Machine Learning, vol. 20, no. 3, pp. 273–297, 1995. doi: 10.1007/BF00994018. Accessed: 17 April 2026.

- [2] Hearst et al., Support Vector Machines, IEEE Intelligent Systems and their Applications, vol. 13, no. 4, pp. 18–28, 1998. doi: 10.1109/5254.708428. Accessed: 17 April 2026.

- [3] Platt, Probabilistic Outputs for SVMs and Comparisons to Regularized Likelihood Methods, in Advances in Large Margin Classifiers, pp. 61–74, MIT Press, 1999. Accessed: 17 April 2026.

- [4] Wu, Lin and Weng, Probability Estimates for Multi-Class Classification by Pairwise Coupling, Journal of Machine Learning Research, vol. 5, pp. 975–1005, 2004. Accessed: 17 April 2026.

- [5] Noble, What Is a Support Vector Machine?, Nature Biotechnology, vol. 24, no. 12, pp. 1565–1567, 2006. doi: 10.1038/nbt1206-1565. Accessed: 17 April 2026.

- [6] Fan et al., LIBLINEAR: A Library for Large Linear Classification, Journal of Machine Learning Research, vol. 9, pp. 1871–1874, 2008. Accessed: 17 April 2026.

- [7] Chang and Lin, LIBSVM: A Library for Support Vector Machines, ACM Transactions on Intelligent Systems and Technology, vol. 2, no. 3, pp. 27:1–27:27, 2011. doi: 10.1145/1961189.1961199. Accessed: 17 April 2026.

- [8] Meyer and Wien, Support Vector Machines, R News, vol. 1, no. 3, pp. 23–26, 2001. Accessed: 17 April 2026.

- [9] scikit-learn, 1.4. Support Vector Machines, scikit-learn documentation, 2024. Accessed: 17 April 2026.

- [10] UCI HAR, Human Activity Recognition Using Smartphones, UCI Machine Learning Repository, 2012. Accessed: 17 April 2026.

- [11] Wikipedia, Kernel method, Wikipedia, The Free Encyclopedia, 2026. Accessed: 17 April 2026.

- [12] Wikipedia, Radial basis function, Wikipedia, The Free Encyclopedia, 2026. Accessed: 17 April 2026.

- [13] LIBSVM, LIBSVM: A Library for Support Vector Machines, LIBSVM project site, 2025. Accessed: 17 April 2026.
