Support Vector Machine deployment quality depends less on one-time benchmark scores and more on repeatable tuning, calibration, and monitoring controls. Part 3 provides an operational playbook for stable model delivery.

Introduction

This final article translates the SVM series into an execution playbook. Part 1 covered theory and fit boundaries. Part 2 covered benchmark diagnostics. Part 3 defines a practical workflow for tuning, calibration, monitoring, and governance so teams can sustain model quality over time rather than treating model release as a one-off event.

This article provides technical operational guidance only and does not constitute legal advice; compliance obligations vary by jurisdiction, sector, and use context.

For broader AI lifecycle controls, connect this workflow with data provenance traceability and LLM operational governance patterns.

Scope and Claim Classification

This playbook uses three claim classes:

- Source-confirmed findings grounded in cited SVM literature and documented tooling behavior.

- Operational synthesis that combines those sources into repeatable workflow controls.

- Risk-management recommendations that support governance decisions but do not replace jurisdiction-specific legal or regulatory analysis.

The workflow is designed as a practical baseline. Teams should adapt thresholds, escalation gates, and retention policies to domain-specific risk tolerance and applicable legal obligations.

Reference and Maintenance Note

Production controls remain reliable only when they are continuously maintained. Revalidate thresholds, calibration behavior, drift triggers, and comparator performance on a regular cadence, and update runbooks when data contracts or tooling assumptions materially change.

Deployment Principle: Treat SVM as a Controlled System

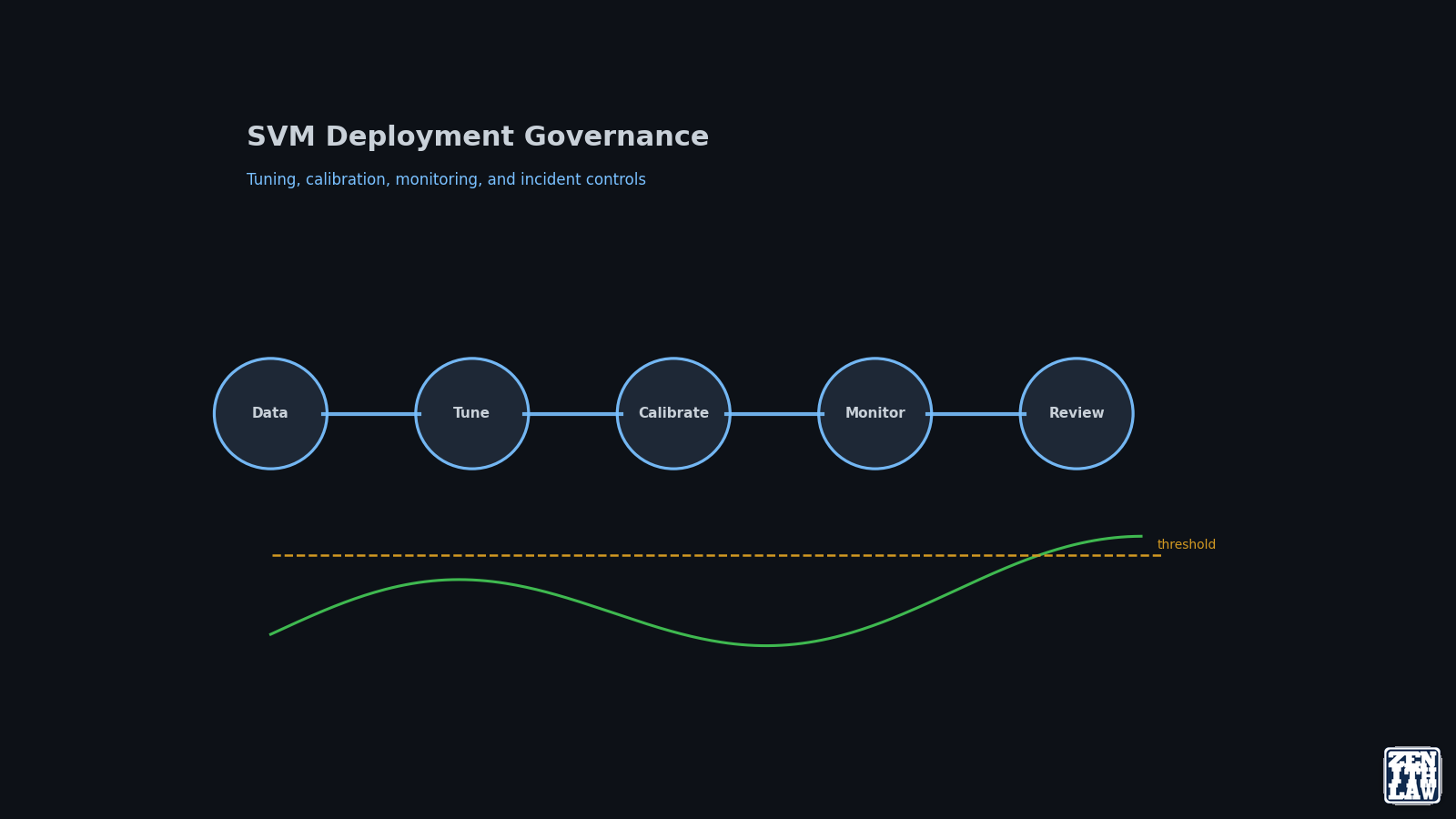

SVM quality in production depends heavily on control loops and not only on static hyperparameters. The most consistent teams formalize SVM operations as an iterative system:

- Data-contract checks.

- Tuning and calibration gates.

- Class-level monitoring.

- Drift-triggered retraining.

- Post-release audit and learning capture.

This pattern supports reproducibility and reduces the loss of institutional memory during handoffs.

Step-by-Step Tuning Workflow

1. Lock preprocessing contracts

- Fit scaling on train data only.

- Freeze transforms for validation and test.

- Version feature schema and missing-value strategy.
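A minimal sketch of the preprocessing contract, assuming scikit-learn and synthetic stand-in data. The `contract` dictionary, its keys, and the `schema_version` value are hypothetical names chosen for illustration, not part of any library. Keeping the imputer and scaler inside one pipeline means their statistics are learned from training rows only and are reused unchanged at validation and test time.

```python
from sklearn.datasets import make_classification
from sklearn.impute import SimpleImputer
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Synthetic stand-in data; replace with the project's versioned dataset.
X, y = make_classification(n_samples=300, n_features=8, n_informative=5, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0
)

# Imputation and scaling are pipeline steps, so fit() sees training rows only.
model = make_pipeline(
    SimpleImputer(strategy="median"),
    StandardScaler(),
    SVC(kernel="rbf", C=1.0, gamma="scale"),
)
model.fit(X_train, y_train)

# Record the frozen transform state so it can be versioned with the model.
imputer = model.named_steps["simpleimputer"]
scaler = model.named_steps["standardscaler"]
contract = {
    "schema_version": "v1",  # hypothetical version tag
    "n_features": int(scaler.n_features_in_),
    "missing_value_strategy": imputer.strategy,
    "mean": scaler.mean_.round(6).tolist(),
    "scale": scaler.scale_.round(6).tolist(),
}

# score() applies the already-fitted transforms to the test rows; nothing is refit.
test_accuracy = model.score(X_test, y_test)
```

Storing `contract` next to the serialized model gives later releases something concrete to compare against when checking for scaling parameter changes.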

2. Build baseline ladder

- Linear SVM baseline.

- RBF SVM with log-grid tuning for $C$ and $\gamma$.

- Optional comparator (for example, a random forest or a large-scale linear classifier) when overlap risk is high [5].
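The first two rungs of the ladder can be sketched as follows, assuming scikit-learn and synthetic three-class data. The grid bounds, fold count, and sample size are illustrative assumptions, not recommended values.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.model_selection import GridSearchCV, StratifiedKFold, cross_val_score
from sklearn.pipeline import Pipeline, make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC, LinearSVC

# Synthetic stand-in data for illustration only.
X, y = make_classification(
    n_samples=240, n_features=8, n_informative=5, n_classes=3, random_state=0
)
cv = StratifiedKFold(n_splits=3, shuffle=True, random_state=0)

# Rung 1: linear SVM baseline, scored with macro F1 across folds.
linear = make_pipeline(StandardScaler(), LinearSVC(C=1.0, max_iter=5000))
linear_f1 = cross_val_score(linear, X, y, cv=cv, scoring="f1_macro").mean()

# Rung 2: RBF SVM tuned over a logarithmic grid for C and gamma.
rbf = Pipeline([("scale", StandardScaler()), ("svc", SVC(kernel="rbf"))])
param_grid = {
    "svc__C": np.logspace(-1, 2, 4),      # 0.1, 1, 10, 100
    "svc__gamma": np.logspace(-3, 0, 4),  # 0.001, 0.01, 0.1, 1
}
search = GridSearchCV(rbf, param_grid, scoring="f1_macro", cv=cv)
search.fit(X, y)

# Compare the tuned RBF model against the linear baseline.
rbf_gain = search.best_score_ - linear_f1
```

A small or negative `rbf_gain` is a signal to keep the linear model, which is cheaper to retrain and easier to monitor.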

3. Use multi-metric selection

Select by a constrained metric set, not a single score:

- Macro F1.

- Class-level recall floors.

- Kappa or agreement-strength metric.

- Pairwise confusion corridor counts.
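One way to assemble the constrained metric set is sketched below with scikit-learn metrics. The function name `selection_report`, the 0.80 recall floor, and the activity labels are hypothetical; here a "corridor" is taken to mean an off-diagonal (true, predicted) cell of the confusion matrix.

```python
from sklearn.metrics import cohen_kappa_score, confusion_matrix, f1_score, recall_score


def selection_report(y_true, y_pred, labels, recall_floor=0.80):
    """Summarize one candidate model with several metrics instead of one score."""
    cm = confusion_matrix(y_true, y_pred, labels=labels)
    recalls = recall_score(y_true, y_pred, labels=labels, average=None, zero_division=0)

    # Confusion corridors: non-empty off-diagonal cells, largest count first.
    corridors = [
        ((labels[i], labels[j]), int(cm[i, j]))
        for i in range(len(labels))
        for j in range(len(labels))
        if i != j and cm[i, j] > 0
    ]
    corridors.sort(key=lambda item: item[1], reverse=True)

    per_class_recall = {label: float(r) for label, r in zip(labels, recalls)}
    return {
        "macro_f1": float(
            f1_score(y_true, y_pred, labels=labels, average="macro", zero_division=0)
        ),
        "kappa": float(cohen_kappa_score(y_true, y_pred, labels=labels)),
        "per_class_recall": per_class_recall,
        "below_floor": [l for l, r in per_class_recall.items() if r < recall_floor],
        "corridors": corridors,
    }


# Hypothetical labels and predictions, for illustration only.
labels = ["sit", "stand", "walk"]
y_true = ["sit", "sit", "sit", "stand", "stand", "walk", "walk", "walk"]
y_pred = ["sit", "stand", "stand", "stand", "stand", "walk", "walk", "sit"]
report = selection_report(y_true, y_pred, labels)
```

In this toy example `report["below_floor"]` lists `sit` and `walk`, and the largest corridor is `sit` predicted as `stand`, which a single aggregate score would not reveal.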

4. Record stability, not only best score

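Capture fold variance and the width of the near-best region. If strong performance exists only in a narrow peak of the search grid, treat the model as high-maintenance under retraining, because a small data shift can move the optimum off that peak.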
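A stability summary along these lines can be sketched with NumPy. The function name `near_best_region` and the 0.01 tolerance are assumptions for illustration; the inputs are per-candidate mean and standard deviation of the cross-validated score, such as the `mean_test_score` and `std_test_score` arrays that scikit-learn's `GridSearchCV` exposes in `cv_results_`.

```python
import numpy as np


def near_best_region(mean_scores, std_scores, tolerance=0.01):
    """Describe how wide the well-performing part of a search grid is."""
    mean_scores = np.asarray(mean_scores, dtype=float)
    std_scores = np.asarray(std_scores, dtype=float)

    best = int(np.argmax(mean_scores))
    # Candidates whose mean score is within `tolerance` of the best mean.
    near = mean_scores >= mean_scores[best] - tolerance

    return {
        "best_index": best,
        "best_mean": float(mean_scores[best]),
        "best_fold_std": float(std_scores[best]),  # fold-to-fold variability at the optimum
        "near_best_count": int(near.sum()),
        "near_best_fraction": float(near.mean()),
    }


# Hypothetical scores for four grid candidates.
summary = near_best_region(
    mean_scores=[0.70, 0.90, 0.895, 0.60],
    std_scores=[0.02, 0.03, 0.01, 0.05],
)
```

A low `near_best_fraction` combined with a high `best_fold_std` is the narrow-peak pattern described above.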

Calibration and Decision Reliability

Raw SVM margins are not calibrated probabilities by default. If downstream decisions use risk thresholds, add an explicit calibration workflow ([2]; [3]).

Minimum calibration checks:

- Reliability by class, not only global.

- Threshold sensitivity under scenario perturbations.

- Drift impact on confidence intervals after retraining.
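A per-class reliability check could look like the sketch below, assuming scikit-learn's `CalibratedClassifierCV` with its sigmoid option, which is a Platt-style fit in the spirit of [2]. The synthetic data, the bin count, and the use of the largest bin-level gap as a summary are illustrative assumptions.

```python
import numpy as np
from sklearn.calibration import CalibratedClassifierCV, calibration_curve
from sklearn.datasets import make_classification
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Synthetic stand-in data for illustration only.
X, y = make_classification(
    n_samples=600, n_features=8, n_informative=5, n_classes=3, random_state=0
)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, stratify=y, random_state=0
)

# Wrap the scaled SVM so calibration is learned on held-out folds of the training set.
base = make_pipeline(StandardScaler(), SVC(kernel="rbf", C=1.0, gamma="scale"))
calibrated = CalibratedClassifierCV(base, method="sigmoid", cv=3)
calibrated.fit(X_train, y_train)
proba = calibrated.predict_proba(X_test)

# Reliability by class: treat each class one-vs-rest and compare the observed
# positive rate with the mean predicted probability in each non-empty bin.
reliability_gap = {}
for k, label in enumerate(calibrated.classes_):
    is_class = (y_test == label).astype(int)
    frac_pos, mean_pred = calibration_curve(is_class, proba[:, k], n_bins=5)
    reliability_gap[int(label)] = float(np.max(np.abs(frac_pos - mean_pred)))
```

A class whose gap is much larger than the others is a candidate for a class-specific threshold review, even when the global reliability curve looks acceptable.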

Monitoring Blueprint for Production

Track three layers.

Layer A: Data and feature drift

- Distribution shift on top features.

- Sensor or source availability changes.

- Scaling parameter drift indicators.
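As one possible distribution-shift indicator for a single feature, the sketch below computes a population stability index (PSI) with NumPy. PSI is a choice made here for illustration, not a method named in this series; the function name, bin count, and smoothing constant are assumptions, and any alert threshold should be set per feature from historical data.

```python
import numpy as np


def population_stability_index(reference, live, n_bins=10, eps=1e-6):
    """PSI between a reference sample and a live sample of one numeric feature."""
    reference = np.asarray(reference, dtype=float)
    live = np.asarray(live, dtype=float)

    # Bin edges come from reference quantiles, so reference bins hold similar mass.
    edges = np.quantile(reference, np.linspace(0.0, 1.0, n_bins + 1))
    # Live values outside the reference range are counted in the outermost bins.
    live = np.clip(live, edges[0], edges[-1])

    ref_share = np.histogram(reference, bins=edges)[0] / reference.size
    live_share = np.histogram(live, bins=edges)[0] / live.size

    # Floor the shares to avoid log(0) when a bin is empty.
    ref_share = np.clip(ref_share, eps, None)
    live_share = np.clip(live_share, eps, None)
    return float(np.sum((live_share - ref_share) * np.log(live_share / ref_share)))


# Synthetic example: an unchanged window versus a window shifted by one unit.
rng = np.random.default_rng(0)
reference_window = rng.normal(0.0, 1.0, 2000)
psi_unchanged = population_stability_index(reference_window, reference_window)
psi_shifted = population_stability_index(reference_window, reference_window + 1.0)
```

Running the same function on the top features per scoring window produces a simple time series that can feed the drift triggers.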

Layer B: Decision behavior

- Class frequency drift.

- Top confusion corridors.

- Probability-threshold override rates.
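Class frequency drift can be tracked with a small standard-library sketch like the one below. The function names are hypothetical, and total variation distance is one reasonable summary chosen here for illustration.

```python
from collections import Counter


def class_share(predictions, labels):
    """Fraction of predictions assigned to each label."""
    counts = Counter(predictions)
    total = len(predictions)
    return {label: counts.get(label, 0) / total for label in labels}


def class_frequency_drift(reference_preds, live_preds, labels):
    """Per-class change in predicted share, plus total variation distance."""
    ref = class_share(reference_preds, labels)
    live = class_share(live_preds, labels)
    per_class = {label: live[label] - ref[label] for label in labels}
    total_variation = 0.5 * sum(abs(delta) for delta in per_class.values())
    return per_class, total_variation


# Hypothetical prediction windows, for illustration only.
reference_preds = ["a"] * 5 + ["b"] * 5
live_preds = ["a"] * 8 + ["b"] * 2
per_class, total_variation = class_frequency_drift(reference_preds, live_preds, ["a", "b"])
```

Because this check needs only predictions, it can run before ground-truth labels arrive and act as an early warning for the outcome metrics in Layer C.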

Layer C: Outcome and quality

- Macro and per-class recall trend.

- Error-cost weighted score for critical classes.

- Retraining delta versus previous stable release.

Governance Controls for Sustainable Operational Value

A benchmark delivers value once; a reusable governance artifact delivers value across repeated releases. For sustained operational reliability, maintain the records below, disclose those required by applicable law, and share additional records where organizational policy permits, while preserving non-waivable user and consumer rights:

- Dataset and split protocol.

- Hyperparameter search space and selected region.

- Class-level confusion tables by release.

- Calibration artifacts.

- Known failure corridors and mitigations.

This shifts quality from isolated results to reproducible practice.

Deployment Readiness Gates Before Promotion

Before release, define non-negotiable gates that tie model behavior to operational risk:

- Minimum per-class recall thresholds for safety-relevant classes.

- Maximum tolerated confusion-corridor volume for known high-risk transitions.

- Calibration reliability bounds for threshold-driven actions.

- Drift budget limits that trigger rollback or constrained rollout.
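The gates above can be encoded as data and checked mechanically before promotion. The following standard-library sketch assumes a hypothetical `ReleaseGates` structure; every threshold and class label in the example is a placeholder, not a recommendation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReleaseGates:
    recall_floors: dict         # class label -> minimum acceptable recall
    corridor_caps: dict         # (true_label, predicted_label) -> maximum count
    max_calibration_gap: float  # largest tolerated reliability gap
    max_drift_score: float      # drift budget for the release window


def check_gates(gates, per_class_recall, corridor_counts, calibration_gap, drift_score):
    """Return a list of human-readable gate failures; empty means promotable."""
    failures = []
    for label, floor in gates.recall_floors.items():
        recall = per_class_recall.get(label, 0.0)  # missing class counts as zero recall
        if recall < floor:
            failures.append(f"recall[{label}]={recall:.2f} below floor {floor:.2f}")
    for pair, cap in gates.corridor_caps.items():
        count = corridor_counts.get(pair, 0)
        if count > cap:
            failures.append(f"corridor {pair[0]}->{pair[1]} count {count} exceeds cap {cap}")
    if calibration_gap > gates.max_calibration_gap:
        failures.append(f"calibration gap {calibration_gap:.2f} exceeds bound")
    if drift_score > gates.max_drift_score:
        failures.append(f"drift score {drift_score:.2f} exceeds budget")
    return failures


# Hypothetical gates and measurements, for illustration only.
gates = ReleaseGates(
    recall_floors={"fall": 0.95},
    corridor_caps={("fall", "sit"): 5},
    max_calibration_gap=0.10,
    max_drift_score=0.25,
)
failures = check_gates(gates, {"fall": 0.91}, {("fall", "sit"): 3}, 0.04, 0.30)
passing = check_gates(gates, {"fall": 0.97}, {("fall", "sit"): 3}, 0.04, 0.10)
```

Versioning the `ReleaseGates` values alongside the model keeps the promotion decision reproducible when thresholds change between releases.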

These gates reduce the chance of approving models that look acceptable in aggregate but fail in classes that drive user harm or support cost.

Comparator Retention and Model-Risk Management

Production governance improves when at least one comparator model is retained in scheduled retraining. This avoids silent lock-in to a degraded inductive bias and preserves evidence for architecture changes when data geometry shifts.

A practical pattern is:

- Keep SVM and one non-SVM comparator in recurring evaluation.

- Track delta by class, not only global metrics.

- Require explicit rationale when retiring a comparator.
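Tracking the delta by class can be as simple as the following standard-library sketch. The function names and the toy labels are hypothetical; the comparator could be any non-SVM model evaluated on the same held-out rows.

```python
def per_class_recall(y_true, y_pred, labels):
    """Recall for each label; NaN when the label has no true examples."""
    recalls = {}
    for label in labels:
        support = sum(1 for t in y_true if t == label)
        hits = sum(1 for t, p in zip(y_true, y_pred) if t == label and p == label)
        recalls[label] = hits / support if support else float("nan")
    return recalls


def recall_delta_by_class(y_true, svm_pred, comparator_pred, labels):
    """Positive values mean the SVM recalls that class better than the comparator."""
    svm = per_class_recall(y_true, svm_pred, labels)
    comparator = per_class_recall(y_true, comparator_pred, labels)
    return {label: svm[label] - comparator[label] for label in labels}


# Hypothetical predictions from both models on the same evaluation rows.
y_true = ["a", "a", "b", "b"]
svm_pred = ["a", "a", "b", "a"]
comparator_pred = ["a", "b", "b", "b"]
delta = recall_delta_by_class(y_true, svm_pred, comparator_pred, ["a", "b"])
```

In this toy case the two models have identical accuracy, yet the per-class deltas have opposite signs, which is the kind of evidence a global metric hides.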

This aligns model operations with established reliability practice: maintain alternatives until evidence justifies consolidation.

Post-Incident Review Loop for Classifier Systems

When a corridor breach or calibration incident occurs, use a structured review loop:

- Reconstruct the data slice and preprocessing state for the incident window.

- Recompute class-level diagnostics under the prior and current model versions.

- Identify whether failure was caused by geometry shift, threshold policy, or pipeline drift.

- Document permanent controls (feature change, threshold change, retraining trigger, or rollback rule).

This review discipline converts incidents into measurable controls rather than ad hoc one-time fixes.

Model Selection Rule Under Operational Constraints

Use this pragmatic rule set:

- Choose SVM when boundary control, moderate scale, and interpretable optimization levers are priorities.

- Prefer alternative families when overlap-heavy neighborhoods persist after disciplined SVM tuning.

- Keep at least one comparator in routine retraining to avoid model lock-in.

- Escalate to human review when critical class recall falls below agreed safety or user-experience thresholds, with review procedures aligned to applicable legal and fairness requirements in the deployment jurisdiction.

Common Failure Modes and Preventive Actions

- Failure mode: headline metric optimism. Action: enforce class-level acceptance gates.

- Failure mode: unstable hyperparameter peak. Action: track near-optimal region width and retraining variance.

- Failure mode: confidence misuse. Action: calibrate and audit threshold policies.

- Failure mode: silent drift in corridor classes. Action: alert on pairwise transitions, not only aggregate metrics.

Operational Documentation Cadence

To keep the series approachable and reduce cognitive load on multidimensional topics:

- Publish theory, benchmark, and operations as separate installments.

- Keep each installment under a 20-minute read target.

- Add explicit cross-links so readers can enter at any level.

- Provide stable reference anchors and change logs between parts.

This cadence supports readers regardless of background or time budget while preserving analytical depth.

Frequently Asked Questions

What is the single most important SVM deployment control?

Class-level monitoring with confusion-corridor tracking, because it reveals high-impact degradation earlier than aggregate accuracy.

When should I calibrate SVM probabilities?

Calibrate whenever scores are consumed as confidence or risk thresholds in downstream workflows; margin rankings alone are insufficient for probability-driven decisions.

How often should SVM models be retrained?

Use drift and corridor-trigger thresholds rather than fixed calendar frequency, then validate stability against the previous release before promotion.

How do I keep this useful beyond one benchmark?

Publish reproducible artifacts, versioned diagnostics, and post-release failure analyses so others can learn methods, not only final numbers.

Conclusion

SVM can remain a high-quality production option when teams operationalize it as a governed system: controlled preprocessing, evidence-driven tuning, explicit calibration, and class-level monitoring. Long-term value comes from transparent iteration and reproducible diagnostics, not from one benchmark snapshot.

References

- [1] Cortes and Vapnik, Support-Vector Networks, Machine Learning, vol. 20, no. 3, pp. 273–297, 1995. doi: 10.1007/BF00994018. Accessed: 17 April 2026.

- [2] Platt, Probabilistic Outputs for SVMs and Comparisons to Regularized Likelihood Methods, in Advances in Large Margin Classifiers, pp. 61–74, MIT Press, 1999. Accessed: 17 April 2026.

- [3] Wu, Lin and Weng, Probability Estimates for Multi-Class Classification by Pairwise Coupling, Journal of Machine Learning Research, vol. 5, pp. 975–1005, 2004. Accessed: 17 April 2026.

- [4] Noble, What Is a Support Vector Machine?, Nature Biotechnology, vol. 24, no. 12, pp. 1565–1567, 2006. doi: 10.1038/nbt1206-1565. Accessed: 17 April 2026.

- [5] Fan et al., LIBLINEAR: A Library for Large Linear Classification, Journal of Machine Learning Research, vol. 9, pp. 1871–1874, 2008. Accessed: 17 April 2026.

- [6] Chang and Lin, LIBSVM: A Library for Support Vector Machines, ACM Transactions on Intelligent Systems and Technology, vol. 2, no. 3, pp. 27:1–27:27, 2011. doi: 10.1145/1961189.1961199. Accessed: 17 April 2026.

- [7] scikit-learn, 1.4. Support Vector Machines, scikit-learn documentation, 2024. Accessed: 17 April 2026.

- [8] LIBSVM, LIBSVM: A Library for Support Vector Machines, LIBSVM project site, 2025. Accessed: 17 April 2026.

Continue Reading in This Series

These linked articles extend the same evidence trail.

- Support Vector Machine: Practical Guide to Margins, Kernels, and Tuning

- Support Vector Machine Series Part 2: Benchmark and Error Forensics on UCI HAR

- Data Provenance in Machine Learning: Traceability, Graph Methods, and Governance Lessons

- Large Language Models in Practice: From the Transformer to the Present Frontier